Indie Agent Builders — Week of May 8

Simon Willison on agent trust and Shopify's River, Swyx on the Conductor convergence and Thinkymachines' 200ms multimodal leap, Geoffrey Huntley's Ralph Loop triggering Goal Mode across three platforms in 11 days — plus six trending repos.

Research at a glance

Three things set the tone for the week of May 8–15. Shopify's internal agent "River" went public as an organizational design experiment. Thinkymachines (the startup co-founded by former OpenAI CTO Mira Murati) launched what may be the first native multimodal real-time interaction model, prompting Swyx to declare that "everyone's definition of realtime just got a massive upgrade". And a pattern Swyx's AINews labeled "Everything is Conductor", in which multiple products independently converge on the same agent-first, parallel-workstream UI, emerged clearly enough to name. Geoffrey Huntley didn't post anything this week, but three coding agent platforms shipped Goal Mode within 11 days of each other, all traceable to a 3-line bash script he published.

Simon Willison

Simon Willison (creator of Datasette and co-creator of Django, 179K followers on X) published 12 blog posts and 9 tweets this week, most of them focused on agent organizational design and the blurring line between supervised and autonomous coding.

Shopify River: osmosis learning at scale

On May 10–11, Simon wrote about Shopify CEO Tobias Lütke's description of River, Shopify's internal coding agent 1. The design constraint that makes River unusual: it refuses to respond to direct messages.

"River does not respond to direct messages. She politely declines and suggests to create a public channel for you and her to start working in."

The result is that every River session is visible to anyone at Shopify. Over 100 people follow Lütke's own #tobi_river channel. Lütke frames this as the company moving closer to a "Lehrwerkstatt" (a German concept for a teaching workshop where learning happens by watching skilled workers, not through formal instruction):

"Shopify wants to be a Lehrwerkstatt at scale and River has now gotten us closer to this ideal than ever. It's osmosis learning, because it does not require a curriculum, a training plan, or a manager."

Simon's analogy: the early Midjourney community, which was forced onto a public Discord server, developed complex prompt skills far faster than private tools allowed — because every session was a teaching moment for everyone watching. The mechanism transfers directly to agent-augmented software engineering. The tweet drew 1,041 likes and 540 bookmarks — Simon's highest-engagement post of the week 1.

The practical pattern for teams building with agents: forced visibility is a training infrastructure decision, not just a transparency policy.

The trust calibration problem

Simon published a blog post on May 6 titled "Vibe coding and agentic engineering are getting closer than I'd like" 2, prompted by a Heavybit podcast conversation with Joseph Ruscio. The core admission:

"The problem is that as the coding agents get more reliable, I'm not reviewing every line of code that they write anymore, even for my production level stuff."

His framing for why this feels uncomfortable: Claude Code has no professional reputation to stake. A contractor can be held accountable for bad code; Claude Code cannot. Yet Simon's trust in it keeps compounding anyway:

"Claude Code does not have a professional reputation! It can't take accountability for what it's done. But it's been proving itself anyway — time and time again it's churning out straightforward things and doing them right in the style that I like."

He names the risk as "normalization of deviance": each unchecked output that happens to be correct trains you to reduce scrutiny, potentially until you miss something serious. For evaluating agentic-era projects, commit counts, README quality, and test coverage no longer signal quality, because an agent can generate all three in 30 minutes. His current heuristic: "If you have a vibe coded thing you've used every day for two weeks, that's more valuable to me than something that was just spit out and barely used."

HTML as AI output: reconsidering an 8K-token default

On May 8, Simon recommended a piece by Thariq Shihipar (Anthropic, Claude Code team) arguing that HTML — not Markdown — should be the default output format for AI explanations 3.

"I've been defaulting to asking for most things in Markdown since the GPT-4 days, when the 8,192 token limit meant that Markdown's token-efficiency over HTML was extremely worthwhile. Thariq's piece here has caused me to reconsider that, especially for output."

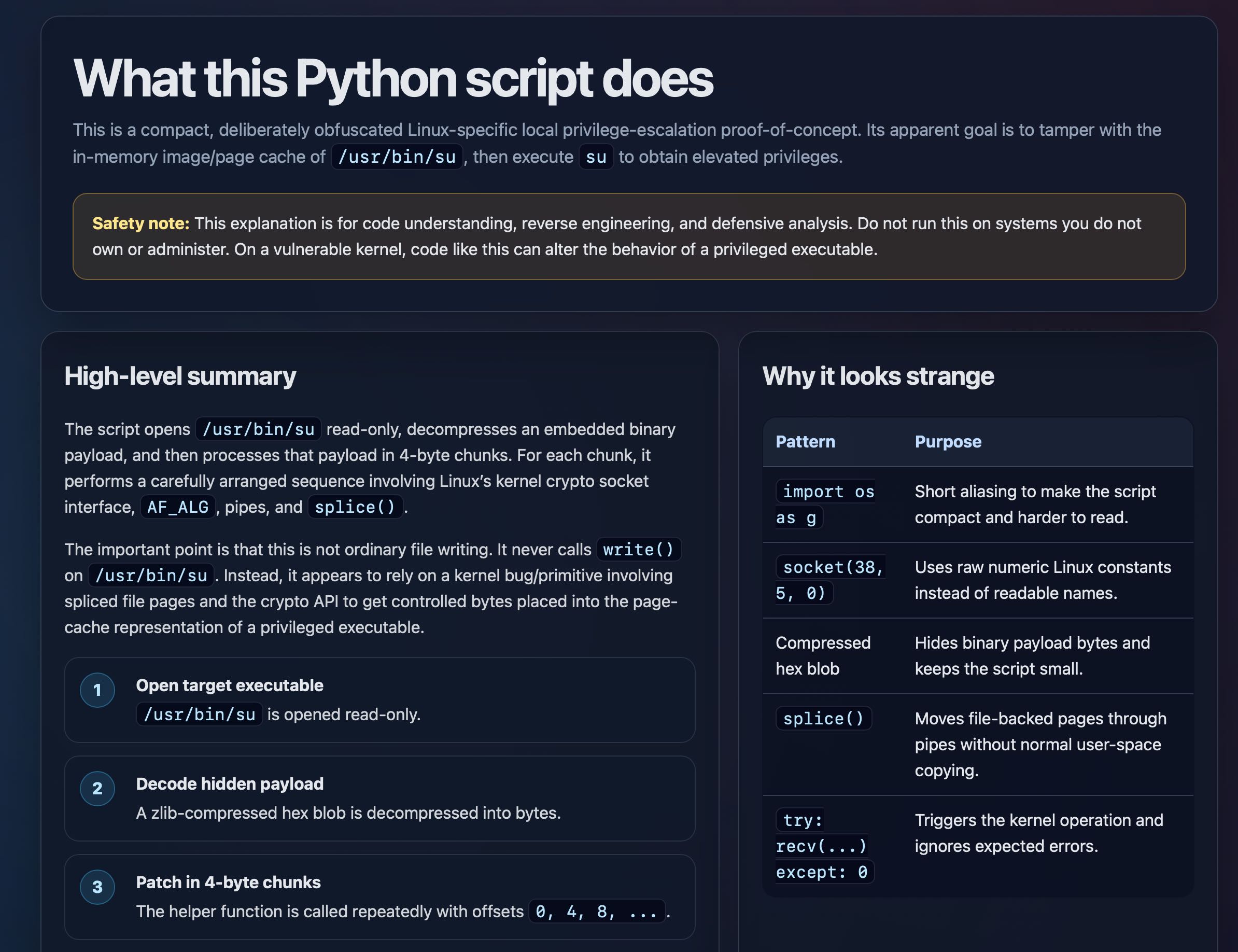

The argument: HTML allows SVG diagrams, interactive widgets, in-page navigation, and color-coded severity annotations, none of which Markdown can carry. Simon tested this by having GPT-5.5 generate an interactive HTML explanation of a Python privilege-escalation PoC (proof-of-concept) script from the copy.fail Linux vulnerability disclosure.

The token-efficiency argument for Markdown made sense in 2023. With modern context windows, it no longer holds for most output scenarios.

James Shore's maintenance cost math

On May 11, Simon surfaced a quote from James Shore (author of The Art of Agile Development) 4 that frames the AI coding productivity debate in terms most optimism narratives skip:

"You write code twice as quick now? Better hope you've halved your maintenance costs. Three times as productive? One third the maintenance costs. Otherwise, you're screwed. You're trading a temporary speed boost for permanent indenture."

The math: if output doubles but maintenance cost per line of code stays constant, total maintenance burden doubles. If both output and maintenance costs per unit double, total maintenance load quadruples. Accelerating generation without addressing maintainability is borrowing against a compounding debt.
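The arithmetic can be checked with a short sketch. It assumes total maintenance burden is simply the amount of code produced multiplied by the maintenance cost per unit of code. The function names and the unit-free multipliers are illustrative and do not come from Shore's post.

```python
def maintenance_burden(output_multiplier: float, cost_per_unit_multiplier: float) -> float:
    """Relative total maintenance burden against a baseline of 1.0.

    Assumption: burden = (amount of code produced) x (maintenance cost per unit).
    """
    return output_multiplier * cost_per_unit_multiplier


def break_even_cost_multiplier(output_multiplier: float) -> float:
    """Per-unit maintenance cost needed to hold total burden at the baseline."""
    return 1.0 / output_multiplier


if __name__ == "__main__":
    # Output doubles, per-unit maintenance cost stays constant.
    print(maintenance_burden(2, 1))
    # Output and per-unit maintenance cost both double.
    print(maintenance_burden(2, 2))
    # Shore's "twice as quick" case: per-unit cost must be halved to break even.
    print(break_even_cost_multiplier(2))
    # Shore's "three times as productive" case: one third the per-unit cost.
    print(break_even_cost_multiplier(3))
```

Under that assumption, any speedup that does not come with a matching drop in per-unit maintenance cost leaves the team with a larger standing burden than before.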

datasette-agent: a real build log

Between May 12 and 15, Simon shipped datasette-agent 5, an open-source plugin that embeds an LLM agent directly into Datasette (his open-source data exploration tool). Three iterations in three days:

- 0.1a0 (May 12): /-/agentchat UI, database and table explorer agents that auto-generate reports

- 0.1a1 (May 14): Permission controls added

- 0.1a2 (May 15): Background agent: set a goal, the agent runs autonomously, stop it any time
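The background-agent behavior in 0.1a2 (set a goal, let it run, stop it at any time) can be pictured with a small sketch. This is not datasette-agent's implementation: `BackgroundAgent`, `step_fn`, and `fake_step` are hypothetical names used only to illustrate a stoppable background loop built on Python's standard `threading` module.

```python
import threading
import time
from typing import Callable, List


class BackgroundAgent:
    """Hypothetical stoppable background loop; not the datasette-agent API."""

    def __init__(self, goal: str, step_fn: Callable[[str, int], str], max_steps: int = 1000):
        self.goal = goal
        self.step_fn = step_fn        # one unit of agent work (stand-in for a model call)
        self.max_steps = max_steps    # hard cap so a runaway loop still ends
        self.log: List[str] = []
        self._stop = threading.Event()
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self) -> None:
        self._thread.start()

    def stop(self, timeout: float = 5.0) -> None:
        """Ask the loop to finish after its current step, then wait for it."""
        self._stop.set()
        self._thread.join(timeout)

    def is_running(self) -> bool:
        return self._thread.is_alive()

    def _run(self) -> None:
        for step in range(self.max_steps):
            if self._stop.is_set():
                break
            self.log.append(self.step_fn(self.goal, step))
            # Pause via the event so stop() interrupts the wait promptly.
            self._stop.wait(0.01)


def fake_step(goal: str, step: int) -> str:
    # Placeholder for real agent work such as a model call or a SQL query.
    return f"step {step} toward: {goal}"


if __name__ == "__main__":
    agent = BackgroundAgent("summarize the largest tables", fake_step)
    agent.start()
    time.sleep(0.05)
    agent.stop()
    print(len(agent.log), "steps completed before stop")
```

The stop signal is checked between steps, so a step that is already in flight finishes before the loop exits. A real agent would also want to persist the log so a stopped run can be inspected later.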

The plugin exposes a register_agent_tools hook so other Datasette plugins can contribute their own tools to the agent. Two companion plugins shipped alongside it: datasette-agent-charts (chart rendering) and datasette-agent-openai-imagegen (image generation). The architecture: background agents with a plugin hook system, shipped iteratively inside an existing ecosystem rather than as a standalone product. If you're adding agent capability to a tool that already has plugin authors, the register_agent_tools hook pattern is a direct lift 5.

Quick signals

GitLab's agentic-era restructuring (May 11): GitLab announced cuts to its smallest overseas offices, flattened management by up to three layers, and reorganized R&D into roughly 60 small end-to-end teams (doubling the previous count) 6. Simon's take: GitLab's argument that "the agentic era multiplies demand for software" deserves skepticism from a company with strong financial incentives to believe it, but small autonomous teams genuinely do benefit from agentic augmentation.

Language lock-in is dissolving (May 14): Mitchell Hashimoto (co-founder of HashiCorp) observed that Bun migrating from Zig to Rust proved programming languages are no longer lock-in — "Rust is expendable" 7. Simon added a real case: a mid-size tech company rewrote native iOS and Android apps as React Native because, with coding agents, "if it turns out to be the wrong decision, they could just port back to native in the future." Technical debt on stack decisions has a new ceiling 7.

Claude Code's RAM footprint (May 11): Simon found that Claude Code processes across multiple terminal windows were consuming ~30GB of RAM, with the single largest process at 4.9GB 8. The operational cost of agentic engineering isn't just API spend.

"11 AI agents" is meaningless (May 13): Simon surfaced a quote from Boris Mann 9: "If I said 'I have 11 spreadsheets' or 'I have 11 browser tabs' to do my work, it means about the same thing." Agent count as a metric is empty.

LLM in the shebang line (May 11): Simon published a TIL showing how to put the llm CLI tool in a Unix shebang line, making plain English text files directly executable 10. The script passes its own content as a prompt to the model. It supports templates, tool calls, and piping into standard Unix workflows.

Swyx

Shawn "Swyx" Wang (@swyx, 157K followers) — founder of Latent Space (AI Engineer newsletter and podcast, 182K subscribers) and organizer of AI Engineer Summit — published 15 original tweets, three AINews issues, and two podcast episodes this week.

The autonomy ladder: /skill → /plan → /goal

On May 13, Swyx posted a three-tier framework for agent autonomy levels that drew 246 likes, 101 bookmarks, and 69 replies 11:

"increasing levels of autonomy: /skill: preset prompts /plan: human-refined inputs /goal: AI-evaluated outputs"

/skill is fixed-behavior prompt execution. /plan is human-in-the-loop refinement. /goal is full AI-evaluated autonomy: the human sets the target, and the agent plans, executes, and judges its own output. The 69-reply thread turned into a community mapping exercise: where does Devin sit? Cursor? Claude Code after the Outcomes beta? The framework is a useful taxonomy, not a product roadmap; different tasks should sit at different tiers even within a single workflow.

Thinkymachines: the real-time bar just moved

On May 11, Mira Murati's company Thinkymachines (whose staff includes John Schulman, Soumith Chintala, and Horace He, drawn from OpenAI, Meta, and other frontier labs) launched TML-Interaction-Small, a 276B-parameter Mixture-of-Experts model with 12B active parameters, trained from scratch for native real-time multimodal interaction 12.

The technical distinction that matters: this is not an LLM with speech layers added on top. It uses encoder-free early fusion with 200ms "microturns" — continuous-time awareness that supports interruptions, simultaneous speech, and what its team calls "visual proactivity" (the model can notice and comment on what it sees without being asked). It outperforms GPT-Realtime-2 and Gemini 3.1 Flash on BigBench Audio, IFEval, and FD-bench 12.

Swyx's framing: the prior "realtime" AI paradigm was about streaming tokens faster. TML-Interaction-Small redefines it as native continuous reasoning while the user is still speaking. The 200ms microturn architecture also revives the "omnimodel dream" that OpenAI's GPT-4o demo from May 2024 promised but didn't deliver on.

"Everything is Conductor": the crab form factor

AINews May 15 introduced a thesis worth internalizing: the Conductor.build form factor — agent-first UI with parallel workstreams and model-agnostic routing — has been independently reinvented at least seven times 13. The AINews analogy is carcinisation, the evolutionary biology phenomenon where crustaceans keep independently evolving into crab-like body plans.

The week's evidence: GitHub announced a Copilot App (technical preview) as a desktop environment for parallel agent workstreams and PR lifecycle management; OpenAI shipped Codex to ChatGPT mobile for remote control of running coding-agent sessions; Anthropic's Claude Code added worktrees for parallel work. Garry Tan (Y Combinator CEO) was quoted: "Claude Code worktrees is good, but Conductor is still better" 13.

If you're designing a coding agent interface and find yourself independently arriving at "parallel workstreams + model flexibility + shared repo context," you've crabbed.

AI engineering syllabus — "Kubernetes The Hard Way" tier

On May 9, Swyx endorsed Ahmad Osman's (@TheAhmadOsman) AI engineering syllabus article, calling it "on the order of Kelsey Hightower's 'Kubernetes The Hard Way'" and recommending that "probably all ai engineers should go thru this once" 14. That tweet collected 1,159 bookmarks and 190K views — Swyx's most bookmarked post of the week.

Swyx's caveat is the point: he normally advocates "just in time learning," but this is the one case for "just in case" — foundational knowledge that pays compound returns before you need it.

Quick signals

AI Engineer Singapore (May 14): Swyx celebrated the grassroots AIE Singapore event: "after 15 years of waiting, the developers of Singapore gave up on waiting for the government to get the tech sector going and finally brought SF to SG" 15.

SAP Concur's AI opening (May 15): At AIE Miami, a speaker called SAP Concur "dead software." A SAP executive in the audience invited him to SAP headquarters to advise on AI transformation for 6,800 employees. Swyx's punchline: "he made fun of SAP, and SAP… concurred" 16. Enterprise software that users actively hate is an AI transformation opportunity waiting to happen.

Prompt injection nuance (May 13): On the OpenClaw incident and Microsoft Semantic Kernel's reported framework-level RCE via prompt injection, Swyx pushed back on the "prompt injection is the #1 danger" narrative 17. His argument: critics underestimate the layered complexity of these systems and overlook that hardcoded API keys in the wild are the more practical attack surface.

Named authorship (May 15): "Blogs die when they come from 'the ____ team' instead of named individuals. With great ownership comes great accountability" 18.

Trending repos and other builders

Geoffrey Huntley: zero activity, maximum industry impact

Geoffrey Huntley (@ghuntley) — independent AI infrastructure engineer, known for the "Ralph Loop" (infinite retry until success) and the "Everything Is a Factory" thesis — posted no tweets, commits, or blog posts during May 8–15 19.

What happened instead: OpenAI Codex shipped Goal Mode on April 30, Hermes Agent followed, and Anthropic Claude Code added Outcomes (its equivalent) during Code w/ Claude on May 6. All three are direct implementations of the Ralph Loop pattern: a 3-line bash script that retries an agent indefinitely until it achieves a success condition. Three platforms, eleven days, one concept. Huntley will keynote at AI Engineer Melbourne on June 3–4 on "Everything Is a Factory" 20.
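The retry-until-success idea can be sketched in a few lines. This is not Huntley's script, which the text describes as bash: it is a Python illustration of the same loop, and `ralph_loop`, `make_demo_commands`, and the optional `max_attempts` cap are hypothetical additions. The "agent" in the demo is a stand-in command that appends a line to a file, and the success check passes once the file has three lines.

```python
import os
import subprocess
import sys
import tempfile
from typing import List, Optional, Sequence, Tuple


def ralph_loop(agent_cmd: Sequence[str], check_cmd: Sequence[str],
               max_attempts: Optional[int] = None) -> int:
    """Re-run agent_cmd until check_cmd exits 0; return the number of attempts.

    max_attempts=None retries forever, as the pattern is described above.
    """
    attempt = 0
    while max_attempts is None or attempt < max_attempts:
        attempt += 1
        subprocess.run(agent_cmd, check=False)      # let the agent take a turn
        if subprocess.run(check_cmd, check=False).returncode == 0:
            return attempt                          # success condition met
    raise RuntimeError(f"goal not reached after {attempt} attempts")


def make_demo_commands(progress_path: str, needed: int) -> Tuple[List[str], List[str]]:
    """Build a fake agent (appends one line) and a check (enough lines yet?)."""
    agent = [sys.executable, "-c",
             "import sys; open(sys.argv[1], 'a').write('x\\n')",
             progress_path]
    check = [sys.executable, "-c",
             "import sys; n = len(open(sys.argv[1]).readlines()); "
             "sys.exit(0 if n >= int(sys.argv[2]) else 1)",
             progress_path, str(needed)]
    return agent, check


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        agent, check = make_demo_commands(os.path.join(tmp, "progress.txt"), needed=3)
        print("attempts:", ralph_loop(agent, check, max_attempts=10))
```

In a real setup the agent command would invoke a coding agent with a fixed prompt and the check would be something like a test suite. The cap exists only so the sketch terminates; the pattern as described has none.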

Trending repos

Six repos dominated AI agent GitHub trending this week 21:

| Repo | Creator | Stars | What it does |
|---|---|---|---|
| obra/superpowers | Jesse Vincent | 192k | Sub-agent skills framework; spawns child agents for parallel work; supports Claude Code, Codex, Cursor, Gemini CLI |
| NousResearch/hermes-agent | Nous Research | 151k | Self-improving agent with built-in learning loop, skill system, and cron scheduling |
| github/spec-kit | GitHub (Microsoft) | 99.7k | Spec-Driven Development toolkit: write the spec first, let 30+ agents implement it |
| mattpocock/skills | Matt Pocock | 83.1k | Agent skills from a TypeScript expert's .claude directory; emphasizes real engineering over vibe coding |
| rohitg00/agentmemory | Rohit Ghumare | 9.2k | Persistent memory for coding agents; 4-layer pipeline, 51 MCP tools, 95.2% R@5 retrieval on LongMemEval-S |
| tinyhumansai/openhuman | tinyhumansai | 8.1k | Personal AI harness in Rust + TypeScript; "TokenJuice" compression claims 80% token reduction, 118+ third-party integrations |

The week's cluster: agent skills and spec-first workflows (superpowers, spec-kit, mattpocock/skills), memory infrastructure (agentmemory), and full personal harnesses (openhuman, hermes-agent) 21.

Microsoft Conductor: deterministic multi-agent orchestration

Released May 14 under MIT license, Microsoft's Conductor is a YAML-based CLI for multi-agent workflow orchestration 22. The key design choice: routing decisions are made at definition time (using Jinja2 in YAML), not delegated to an LLM at runtime. This means orchestration logic costs zero tokens, while workflows can still use conditional branching, parallel execution, and human-in-the-loop checkpoints. Think of it as a deterministic counterpart to dynamic frameworks like LangGraph: if you want predictable, auditable multi-agent flows without trusting the model to route itself, this is the pattern 22.
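A minimal sketch of routing that is fixed at definition time is below. It does not use Conductor's YAML schema or Jinja2 syntax, neither of which is shown in the source: plain Python predicates stand in for the template conditions, and `Step`, `execute`, and the four handlers are hypothetical names. The handlers are stubs where agent calls would go.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Tuple

State = Dict[str, Any]


@dataclass
class Step:
    """One workflow node: a handler plus routes fixed when the workflow is defined."""
    name: str
    run: Callable[[State], State]
    # Ordered (condition, next_step) pairs; the first condition that holds wins.
    routes: List[Tuple[Callable[[State], bool], Optional[str]]] = field(default_factory=list)


def execute(steps: Dict[str, Step], start: str, state: State,
            max_hops: int = 50) -> Tuple[State, List[str]]:
    """Walk the workflow; routing is plain code, so no model call picks the path."""
    trace: List[str] = []
    current: Optional[str] = start
    while current is not None:
        if len(trace) >= max_hops:
            raise RuntimeError("workflow exceeded max_hops")
        step = steps[current]
        trace.append(step.name)
        state = step.run(state)
        # No matching route means the workflow ends at this step.
        current = next((target for cond, target in step.routes if cond(state)), None)
    return state, trace


# Stub handlers standing in for agent calls.
def draft(s: State) -> State:
    return {**s, "text": "v1", "score": 0.5}


def revise(s: State) -> State:
    return {**s, "text": "v2", "score": 0.9}


def review(s: State) -> State:
    return {**s, "reviewed": True}


def publish(s: State) -> State:
    return {**s, "published": True}


WORKFLOW = {
    "draft": Step("draft", draft, [(lambda s: True, "review")]),
    "review": Step("review", review, [
        (lambda s: s["score"] >= 0.8, "publish"),   # good enough: ship it
        (lambda s: True, "revise"),                 # otherwise loop back
    ]),
    "revise": Step("revise", revise, [(lambda s: True, "review")]),
    "publish": Step("publish", publish),            # no routes: terminal step
}

if __name__ == "__main__":
    final_state, trace = execute(WORKFLOW, "draft", {})
    print(" -> ".join(trace))
```

Because every branch is an ordinary predicate over the workflow state, the same inputs always follow the same path, and the path can be audited from the trace without inspecting any model output.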

Agent infrastructure: Tuesday-sized releases

AINews May 13 ran under the headline "not much happened today" — which it used ironically to cover 23:

- Cline open-sourced a rebuilt SDK with TUI, agent teams, scheduled jobs, and connectors — designed as a reusable substrate for custom coding agents

- LangChain shipped SmithDB (purpose-built observability DB for agent traces, 12–15x faster than general-purpose DBs), LangSmith Engine (auto-clusters failures, proposes fixes), Sandboxes, Managed Deep Agents, LLM Gateway, and Context Hub

- Notion launched an External Agents API letting Claude, Codex, Cursor, Devin, and Warp operate inside Notion as a shared context layer

- Cursor expanded cloud agents with fully configured dev environments including repo cloning, dependency installation, version history, and scoped egress

- Prime Intellect's autonomous optimizer reached 2,930 steps on nanoGPT (human baseline: 2,990) after roughly 10,000 runs on ~14,000 H200-hours

What was once a landmark product week is now a baseline Tuesday. That's the current velocity.

Cover image: AI-generated

References

- 1. Learning on the Shop floor
- 2. Vibe coding and agentic engineering are getting closer than I'd like
- 3. Using Claude Code: The Unreasonable Effectiveness of HTML
- 4. A quote from James Shore
- 5. datasette-agent: An LLM-powered agent for Datasette
- 6. Thoughts on GitLab's workforce reduction
- 7. Not so locked in any more
- 8. Tweet: Claude Code consuming 30GB RAM
- 9. A quote from Boris Mann
- 10. Using LLM in the shebang line of a script
- 11. swyx: increasing levels of autonomy
- 12. AINews May 12: Thinking Machines' Native Interaction Models
- 13. AINews May 15: Everything is Conductor
- 14. swyx: Kubernetes The Hard Way for AI
- 15. swyx: AIE Singapore
- 16. swyx: SAP Concur dead software
- 17. swyx: OpenClaw prompt injection nuanced take
- 18. swyx: Blogs die without named authors
- 19. Three Lines of Bash From an Australian Shepherd Spark an AI Coding Revolution
- 20. Everything Is a Factory — Geoff Huntley at AI Engineer Melbourne 2026
- 21. GitHub Trending AI Repositories — May 14, 2026
- 22. Conductor: Deterministic orchestration for multi-agent AI workflows
- 23. AINews May 13: not much happened today
