Seven founder essays worth reading before your next strategy session

Close reads of seven long-form essays by Graham, Altman, Gil, Jassy, Karp, McCormick & Gabriele — distilled into decisions that matter for early-stage AI founders.

Seven long-form pieces landed on blogs, Substacks, and investor relations pages across Silicon Valley between early March and mid-May 2026. The authors range from a co-founder of Y Combinator to the CEO of a $200B-capex infrastructure company to a newsletter writer who now spends most of his working hours inside a terminal. Their concerns run from the philosophical (what does differentiation even mean when AI can replicate almost anything?) to the brutally operational (how many employees should you actually have when revenue triples?). What follows is a close read of each piece, with the arguments stripped down to what an early-stage AI founder can act on.

Paul Graham: when technology kills your product edge

Published: March 5, 2026 · The Brand Age

Author: Paul Graham is a co-founder of Y Combinator (the seed fund behind Airbnb, Stripe, Dropbox, and hundreds of others) and the writer of an essay canon that has shaped how a generation of founders think about product and markets.

Core argument: Brand is the residue that remains after technology makes product differences disappear. 1 Graham uses the Swiss watch industry as a near-perfect case study. From 1945 to 1970 — the "golden age" — Swiss watchmakers competed on thinness and accuracy. 1 Then quartz arrived, wiped out two-thirds of Swiss watch unit sales, and left only three companies standing as independent firms: Patek Philippe, Audemars Piguet, and Rolex. 1 The rest became what Graham calls "zombie brands" inside six holding companies. Patek Philippe survived not by building a better watch, but by pivoting fully to brand-driven luxury positioning from 1987 onward. 1 Its revenue trajectory turned sharply upward only after that pivot. The brand's execution is now almost comically elaborate: buyers must spend years buying lower-tier models before qualifying for the Nautilus waiting list, and Patek monitors secondary markets, buys back hundreds of its own watches annually to trace serial numbers, and cuts off retailers whose customers flip watches. 1 Graham calls this "carefully managing a sustained asset bubble."

The structural tension Graham identifies is this: "Brand is what's left when the substantive differences between products disappear. But making the substantive differences between products disappear is what technology naturally tends to do." 1 He names the deeper conflict: "Branding is centrifugal; design is centripetal." 1 Good design converges on right answers; branding demands distinctiveness. The two can only coexist when the design space is either enormous (painting, literature) or still largely unexplored.

Quality doesn't stop mattering when a market goes brand-driven; its role changes. It becomes a minimum threshold rather than a differentiator. A Patek Philippe watch must keep time accurately enough to maintain the brand's credibility, even though quartz is far more accurate. The brand "must not break character." 1

Graham's prescription for founders who don't want to play the brand game: "Follow the problems." 1 The way to find a golden age — the period before technology commoditizes a market — is to stay close to genuinely hard, unexplored problems. "If you're smart and ambitious and honest with yourself, there's no better guide than your taste in problems." 1

What this means for your decisions:

If you're building an AI product in a category where the underlying model capability is already commoditizing (coding assistants, writing tools, image generation), the watch industry playbook is already visible. The companies that survive will either have built a genuine technological edge that hasn't yet commoditized, or have already begun building brand moats through distribution, community, and workflow lock-in. Ask yourself: which of your current product advantages depends on model capability that a competitor can replicate in six months? Those advantages are the quartz crisis arriving early. The safer bets are the ones tied to proprietary data, workflow integration, or a customer relationship your competitors can't buy.

Sam Altman: the ring of power and the infrastructure bet

Published: April 10, 2026 · Personal blog

Author: Sam Altman is CEO and co-founder of OpenAI (developer of GPT-4, ChatGPT, and now Sora), which he describes as first and foremost a "superintelligence research company."

Core argument: Altman published a sprawling 12,000-word manifesto in response to a New Yorker investigative profile and a Molotov cocktail attack on his home. 2 The post is structured around five personal beliefs, a "ring of power" analysis of AI industry dynamics, the Sora 2 launch, and an infrastructure vision.

The five beliefs form a coherent frame: 2

- Advancing science and technology and empowering all people are moral obligations.

- AI will be the most powerful tool for expanding human capability in history; demand is essentially uncapped.

- Fear and anxiety about AI are justified — safety requires society-wide resilience, not only model alignment.

- AI must be democratized; power cannot concentrate. "I do not think it is right that a few AI labs would make the most consequential decisions about the shape of our future." 2

- Adaptability is non-negotiable — beliefs will change as technology and society evolve.

The "ring of power" section is the most analytically interesting. Altman borrows from Tolkien to explain why the AI industry generates intense, irrational competition: "Once you see AGI you can't unsee it. It has a real 'ring of power' dynamic to it, and makes people do crazy things." 2 His proposed solution is broad technology distribution — nobody should hold the ring. He adds a political condition: "It is important that the democratic process remains more powerful than companies." 2

On infrastructure, Altman states a specific goal: "We want to create a factory that can produce a gigawatt of new AI infrastructure every week." 2 He frames compute as the literal key to revenue growth — a self-reinforcing flywheel. The example he gives for why scale matters: with 10 gigawatts of compute, AI could either cure cancer or deliver personalized tutoring to every student on Earth. "If we are limited by compute, we'll have to choose which one to prioritize; no one wants to make that choice, so let's go build." 2

His timeline signals are the most actionable data points for founders: 2025 should bring agents capable of real cognitive work; 2026 may bring systems capable of novel scientific insights; 2027 may bring robots that can execute real-world tasks. 2 His destination: "Intelligence too cheap to meter is well within grasp." 2

What this means for your decisions:

Altman's timeline, if roughly accurate, compresses your planning horizon. If AI agents can already do real cognitive work and systems capable of novel scientific insights arrive in 2026, the window for products that help people get more from AI agents is already open, and it will close faster than most founders assume. The more counterintuitive signal is in the democratization argument: if Altman is right that the "ring of power" dynamic drives irrational behavior at every lab, then the AI industry will be structurally turbulent at the top for years. For an early-stage founder, that turbulence creates seams: categories that the large labs ignore because they don't fit the ring-of-power worldview.

Elad Gil: 13 observations you won't hear at a conference

Published: April 20, 2026 · Elad Blog

Author: Elad Gil is a serial founder (Color Genomics, Mixer Labs) and early employee at Google. He has backed Stripe, Airbnb, Coinbase, Instacart, and dozens of others as an angel investor and is the author of the High Growth Handbook.

Core argument: Gil's essay is a numbered list of 13 independent observations on AI's current state. They are deliberately raw: "random thoughts," not a unified thesis. The value is the honest investor-operator perspective on dynamics that are too uncomfortable for stage presentations.

Several observations stand out for early-stage founders:

On market size: OpenAI and Anthropic are each at approximately $30 billion revenue run rate — about 0.1% of US GDP each. 3 "AI has grown from roughly zero to 0.25%-0.5% of US GDP in just a few years. If Anthropic and OpenAI hit $100B of revenue by EOY as many think they might, roughly 1% of GDP run rate will be from AI by end of 2026. This is insanely fast." 3
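The percentages above can be sanity-checked with a few lines of arithmetic. The sketch below is a back-of-envelope check, not Gil's own calculation: it infers US GDP from the essay's "$30 billion is about 0.1%" figure (an assumption, not an official statistic) and tries both readings of "$100B of revenue" (combined, or each).

```python
# Back-of-envelope check of the GDP shares quoted above.
# Assumption: US GDP is inferred from the essay's own
# "$30B run rate is about 0.1% of GDP" figure, not from official data.
LAB_RUN_RATE_B = 30.0   # per-lab revenue run rate in $B (from the essay)
SHARE_PER_LAB = 0.001   # "about 0.1% of US GDP each"

implied_gdp_b = LAB_RUN_RATE_B / SHARE_PER_LAB  # in $B


def share_of_gdp(revenue_b: float) -> float:
    """Return revenue as a percentage of the implied GDP."""
    return 100.0 * revenue_b / implied_gdp_b


two_labs_today = share_of_gdp(2 * LAB_RUN_RATE_B)
combined_100b = share_of_gdp(100.0)      # reading 1: $100B combined
each_100b = share_of_gdp(2 * 100.0)      # reading 2: $100B each

print(f"Implied US GDP: ${implied_gdp_b / 1000:.0f}T")
print(f"Two labs today: {two_labs_today:.2f}% of GDP")
print(f"$100B combined: {combined_100b:.2f}% of GDP")
print(f"$100B each: {each_100b:.2f}% of GDP")
```

Under either reading the two labs alone stay below 1% of the implied GDP, so the quoted "roughly 1%" presumably also counts AI revenue outside these two companies. That reading is an inference from the arithmetic, not something stated in the passage.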

On headcount strategy: Multiple later-stage CEOs told Gil they plan to stop growing headcount rather than do layoffs — even as revenue grows 30–100%. 3 Headcount stays flat or slightly decreases as attrition is not replaced. This is the "AI dividend" showing up in org charts.

On exit timing: Gil argues that most AI companies should consider exiting in the next 12–18 months. 3 His reference point: in the internet era from 1995 to 2001, roughly 2,000 companies went public; only approximately 12–24 survived. "Most AI companies, including those that are ramping revenue today, will see the market, competition, and adoption, turn on them." 3

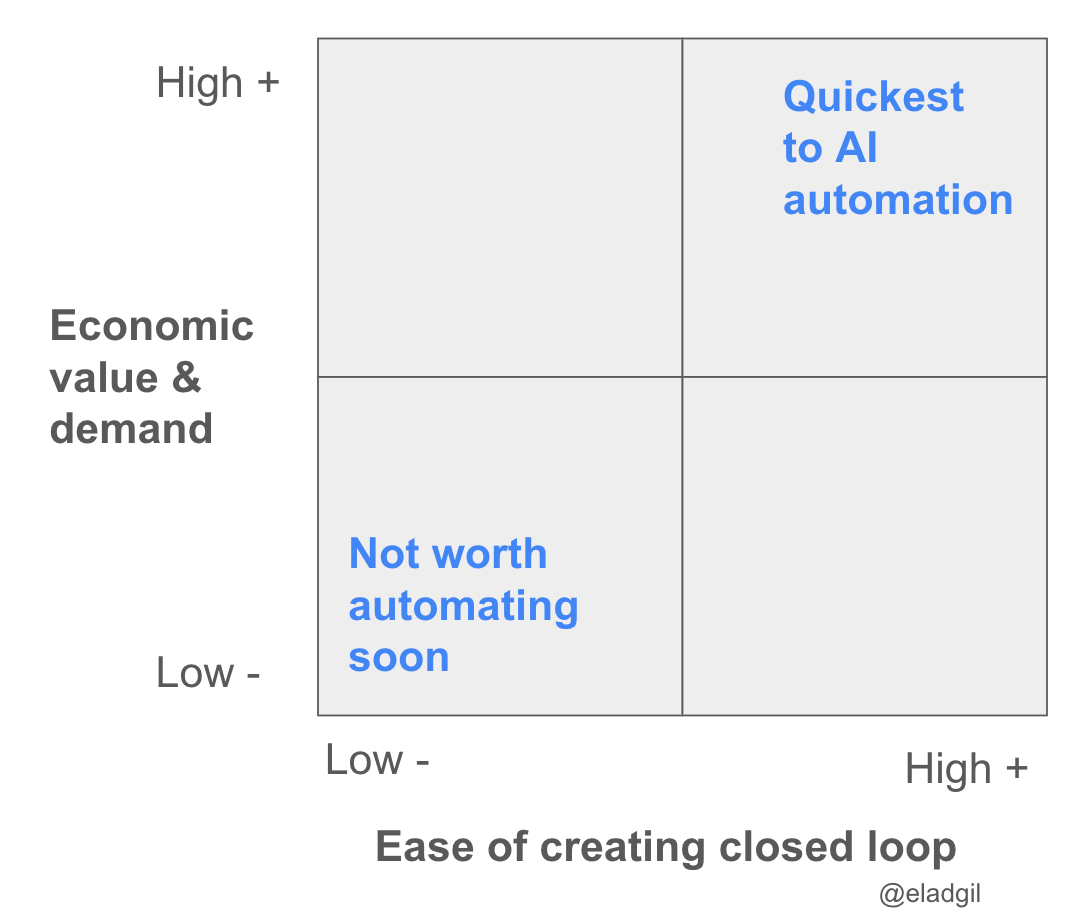

On where automation happens first: Gil maps AI automation risk across a 2×2 matrix using "ease of creating closed loop" versus "economic value and demand." High value, easy closed loop (software engineering, AI research) gets automated first. 3
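One way to put the matrix to work is to score your own product's tasks on the two axes and see which quadrant they land in. The sketch below is a hypothetical planning aid: the `Task` class, the 0-to-1 scores, and the 0.5 threshold are all assumptions for illustration, and only the "high value, easy closed loop gets automated first" rule comes from the essay.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    closed_loop_ease: float  # 0.0-1.0, hypothetical self-assessed score
    economic_value: float    # 0.0-1.0, hypothetical self-assessed score


def quadrant(task: Task, threshold: float = 0.5) -> str:
    """Place a task in one of the four cells of the 2x2 matrix."""
    loop = "easy closed loop" if task.closed_loop_ease >= threshold else "hard closed loop"
    value = "high value" if task.economic_value >= threshold else "low value"
    return f"{value}, {loop}"


def automated_first(task: Task, threshold: float = 0.5) -> bool:
    """True only for the cell the essay says gets automated first."""
    return quadrant(task, threshold) == "high value, easy closed loop"


# Scores below are placeholders, not measurements.
tasks = [
    Task("software engineering", closed_loop_ease=0.9, economic_value=0.9),
    Task("your product's core task", closed_loop_ease=0.4, economic_value=0.8),
]

for task in tasks:
    print(f"{task.name}: {quadrant(task)} (automated first: {automated_first(task)})")
```

The essay does not rank the other three cells, so the sketch deliberately flags only the one it names.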

What this means for your decisions:

Gil's exit timing argument is uncomfortable but worth taking seriously. If you're building in a category where the market could shift dramatically in two years — and most AI categories qualify — then the question "when should I sell?" is a real strategic variable, not a sign of low ambition. The closed-loop matrix is also a useful planning tool: if your product is in a category where the output is easily testable and the economic stakes are high, you are directly in the path of automation. That's not necessarily bad — it means you're building something genuinely valuable — but it means the competitive pressure will arrive faster and harder than in categories with fuzzy outputs.

Andy Jassy: the straight line was a lie

Published: April 9, 2026 · Amazon 2025 Letter to Shareholders

Author: Andy Jassy is CEO of Amazon. He joined the company in 1997 and led AWS from its 2006 launch until becoming Amazon's CEO in 2021. Amazon's current annual revenue exceeds $600 billion.

Core argument: Jassy opens his letter by invoking a New Zealand band whose latest album is titled Straight Line Was a Lie — because most long-term endeavors don't follow a linear path up and to the right. 4 He uses his own career and AWS's 18-year arc to argue that the ability to go back to the starting line — and rebuild with better information — is what separates enduring companies from those that get stuck.

The headline numbers are large: AWS's AI revenue run rate surpassed $15 billion in Q1 2026, approximately 260 times larger than where AWS was at the same point after its original commercial launch. 4 Amazon's chip business — Graviton, Trainium, and Nitro — has an annual revenue run rate over $20 billion, growing at triple-digit percentages year over year. 4 Trainium2 is largely sold out; Trainium3 (shipping since early 2026, with 30–40% better price-performance than comparable GPUs) is nearly fully subscribed; Trainium4, approximately 18 months from availability, is already heavily reserved. 4

Amazon plans approximately $200 billion in capital expenditure for 2026. 4 Jassy is explicit that this is not a speculative bet: OpenAI alone has signed commitments exceeding $100 billion, with additional customer agreements completed or near completion. "We are willing to make large capex investments and endure short-term FCF headwinds for the substantial medium to long-term FCF surplus. AI is a once-in-a-lifetime opportunity where the current growth is unprecedented and the future growth even bigger." 4

The strategic principle Jassy draws from Amazon's own history is "2 > 0" — when uncertain which path will drive the desired outcome, pursue parallel paths. 4 He illustrates this with drone delivery, same-day centers, and Amazon Now running simultaneously rather than sequentially.

Image from: CEO Andy Jassy's 2025 Letter to Shareholders

What this means for your decisions:

"Going back to the starting line" is not a failure mode — it's a capability that early-stage companies are uniquely positioned to exercise. The Bedrock team rebuilt its inference engine (Mantle) in 76 days using AI-assisted development. 4 For a startup, this is a direct argument for keeping core architecture revisable and resisting the temptation to lock in early design decisions with long contracts or deep customer commitments. The "2 > 0" principle applies at your scale too: if you're uncertain whether to build for enterprise or self-serve, building two lightweight versions in parallel will tell you more in 60 days than six months of strategic debate.

Alex Karp: AI value lives in the deployment layer, not the model

Published: May 4, 2026 · Palantir Q1 2026 Letter to Shareholders

Author: Alex Karp is CEO and co-founder of Palantir Technologies, the data analytics and AI platform he built with Peter Thiel and others from 2003 onward. Palantir serves both US government and commercial clients.

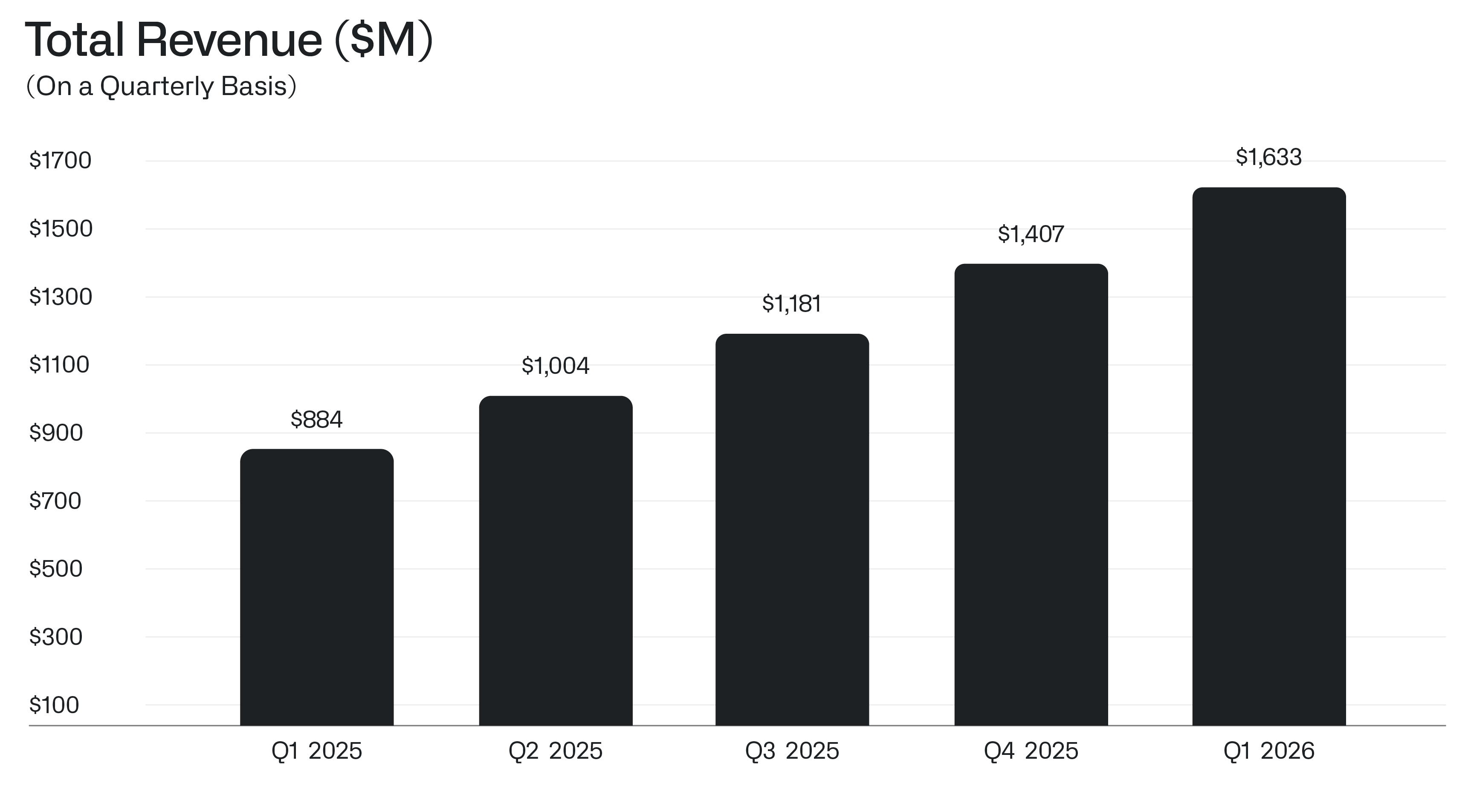

Core argument: Karp's letter announces Q1 revenue of $1.63 billion, 85% year-over-year growth. 5 Q1 profit reached $871 million — more than four times the $214 million earned in Q1 2025. 5 US commercial revenue grew 133% to $595 million. 5 Revenue per employee hit $1.5 million annualized. 5
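The figures above imply a few numbers the summary does not state directly. The sketch below derives them under stated assumptions: "annualized" is taken to mean four times the quarterly revenue, and "profit" is used exactly as quoted, without distinguishing net income from adjusted measures. Treat the outputs as rough implications, not reported figures.

```python
# Figures quoted from the letter, in $ millions.
q1_revenue_m = 1630.0
yoy_growth = 0.85            # 85% year-over-year
q1_profit_m = 871.0
q1_profit_prior_m = 214.0
rev_per_employee_m = 1.5     # annualized, per the letter

# Derived values (assumption: annualized revenue = 4 x Q1 revenue).
profit_multiple = q1_profit_m / q1_profit_prior_m
implied_prior_q1_revenue_m = q1_revenue_m / (1 + yoy_growth)
annualized_revenue_m = 4 * q1_revenue_m
implied_headcount = annualized_revenue_m / rev_per_employee_m
profit_margin = q1_profit_m / q1_revenue_m

print(f"Profit multiple vs. Q1 2025: {profit_multiple:.2f}x")
print(f"Implied Q1 2025 revenue: ${implied_prior_q1_revenue_m:.0f}M")
print(f"Implied headcount: about {implied_headcount:.0f}")
print(f"Profit as share of revenue: {profit_margin:.1%}")
```

The profit multiple comes out just above four, which matches the "more than four times" wording, and the implied headcount is in the low thousands.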

But the financial performance is context for a product argument. Karp's central claim is that raw AI capability — what he calls "AI slop" — generates no durable value without being grounded in what he calls an "Ontology": the ground truth data, tribal knowledge, and organizational context specific to each customer. 5 "We believe it is not hyperbolic to say that nearly all AI workflows that actually create value — especially on the battlefield — are built on Palantir." 5

He borrows from Wittgenstein ("And to think one is obeying a rule is not to obey a rule") and adds an AI gloss: appearing to use AI is not the same as using AI to expose reality. 5 In Karp's framing, model companies are in a race where token costs have dropped a thousandfold and "winners and losers swap places every six months." 5 Palantir's path is different because it is selling AI-powered outcomes on specific institutional problems, not undifferentiated intelligence.

Image from: Q1 2026 | Letter to Shareholders

What this means for your decisions:

Karp's argument applies directly to how you pitch your product's value. If your AI product surfaces answers from a generic model applied to generic inputs, you're selling what Karp calls slop, and the price will compress as models get cheaper. The products that hold price are the ones where the AI is operating on data no competitor has: operational logs, proprietary customer records, institutional processes that took years to accumulate. Early-stage founders often dismiss this because they don't have proprietary data yet. That's precisely the argument for starting with a narrow customer segment where you can acquire deep, specific ground truth quickly rather than building a wide product that stays shallow. The $1.5M revenue per employee figure is also worth benchmarking: if your team is generating significantly less than that per head, ask whether AI tooling in your own operations could close the gap.

Packy McCormick: differentiation as a mathematical obligation

Published: May 13, 2026 · Not Boring — Riding the Leopard

Author: Packy McCormick writes Not Boring, a newsletter with 265,556 subscribers focused on business strategy and emerging technology. He also invests in early-stage companies through Not Boring Capital.

Core argument: McCormick's piece is a transcript of a talk he gave at The Mountain event in Los Angeles on May 6, 2026. 6 The framing is philosophical but the stakes are practical: in a world where AI can produce competent outputs at scale, what makes a human — or a company — irreplaceable?

McCormick's answer draws on Claude Shannon (founder of information theory) and his 1948 mathematical proof: information is surprise. 6 A universe of identical entities producing identical outputs contains no information. Differentiation is therefore not just a strategy — it is mathematically how value is created and how the universe generates knowledge about itself. "The meaning of life is to increase the range and depth of experience in the universe." 6
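The claim that identical outputs carry no information can be made concrete with Shannon's standard entropy formula, H = sum of p * log2(1/p) over the distinct outcomes. The sketch below is a minimal illustration of that formula, not anything from McCormick's talk; the function name and sample data are hypothetical.

```python
import math
from collections import Counter


def entropy_bits(outputs):
    """Shannon entropy, in bits, of the empirical distribution of outputs."""
    counts = Counter(outputs)
    total = len(outputs)
    # Each distinct outcome with probability p contributes p * log2(1/p).
    return sum((c / total) * math.log2(total / c) for c in counts.values())


identical = ["same answer"] * 8   # every entity produces the same output
varied = ["a", "b", "c", "d"]     # four equally likely, distinct outputs

print(entropy_bits(identical))    # zero bits: nothing is ever surprising
print(entropy_bits(varied))       # two bits per output
```

When every output is the same, the only outcome has probability 1 and contributes log2(1) = 0 bits. Four equally likely outputs contribute log2(4) = 2 bits each time one is observed.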

The implication he draws: "Differentiation is a moral obligation. It is also a mathematical one." 6 Shannon's bit-level proof is the bridge between mystical framing and a cold analytical claim.

McCormick also takes the meaning crisis seriously as a strategic variable. A reader's analysis of more than 200 science fiction books found that 59% were about meaning, while only 17% addressed identity. 6 His argument: as AI eliminates material scarcity, the meaning crisis becomes the most urgent unsolved problem — and potentially the largest market. Viktor Frankl's observation applies: "Ever more people today have the means to live but no meaning to live for." 6

The title comes from Joseph Campbell: "to live with godlike composure on the full rush of energy, like Dionysus riding the leopard, without being torn to pieces." 6 The leopard is AI's generative force; the composure is the specifically human contribution.

What this means for your decisions:

McCormick's information-theoretic argument has a concrete product implication: generic AI outputs are entropy-free — they are the expected, the predictable, the least surprising. If your product generates outputs that could have come from anyone using any model, you are building zero information. The founder who thinks carefully about this question is not asking "how do I make my AI better?" but "what is the irreducibly specific thing I can contribute that no model trained on the average of human output can replicate?" That might be your taste, your domain expertise, your access to a specific community, or your interpretation of data no one else has collected. The meaning crisis framing also flags a real market opportunity: if material needs are increasingly satisfied by AI, the products that address purpose, belonging, and experience are going to command premium positioning.

Mario Gabriele: what a personal AI operating system actually looks like

Published: April 23, 2026 · The Generalist — The Writer-Researcher's Guide to Claude Code

Author: Mario Gabriele is founder of The Generalist, a newsletter on technology and business with a six-figure paid subscriber base, and a Partner at Hummingbird, an early-stage venture fund.

Core argument: Gabriele describes in technical detail the personal AI system he built using Claude Code (specifically Opus 4.5, Anthropic's late-2025 model release). 7 His framing is direct: before Opus 4.5, he had opened his terminal "on a handful of occasions, mostly by accident." 7 After building his system, he spends more than 70% of his working hours there.

The system he describes — which he calls Delphi — has three components: 7

- Library: 45,000+ searchable chunks from all Generalist articles, podcast transcripts, Obsidian notes, Readwise highlights, Google Drive files, and desktop documents, indexed using three fused search layers: vector search (Voyage-3 embeddings), keyword search (SQLite FTS5), and a locally fine-tuned cross-encoder reranker distilled from Cohere and trained on approximately 40,000 query-passage pairs.

- Researchers: A multi-agent system that dispatches specialized agents to scan the internal corpus, read new articles, find podcast appearances, and retrieve paywalled content via a headless browser — using Jina, Firecrawl, and YouTube transcript extraction.

- Interface: A custom terminal-based interface shaped to Gabriele's own workflows rather than a general-population app.
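The Library's "fused search layers" can be sketched in a few lines. The article as summarized here does not say how Gabriele fuses the vector and keyword results, so the example below swaps in reciprocal rank fusion, a common choice, as an assumption. The reranker is a hypothetical word-overlap stand-in for his fine-tuned cross-encoder, and the document ids and texts are invented for illustration.

```python
from collections import defaultdict
from typing import Callable, Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse ranked lists of document ids; each hit adds 1 / (k + rank)."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


def rerank(query: str, doc_ids: List[str], texts: Dict[str, str],
           score_fn: Callable[[str, str], float], top_n: int = 2) -> List[str]:
    """Re-order fused candidates with a (query, passage) scoring function."""
    ranked = sorted(doc_ids, key=lambda d: score_fn(query, texts[d]), reverse=True)
    return ranked[:top_n]


def overlap_score(query: str, passage: str) -> float:
    """Hypothetical stand-in for a cross-encoder: fraction of query words found."""
    q, p = set(query.lower().split()), set(passage.lower().split())
    return len(q & p) / len(q) if q else 0.0


# Invented corpus and result lists, standing in for real search backends.
texts = {
    "note-1": "swiss watch brands after the quartz crisis",
    "note-2": "quartz movements and accuracy",
    "note-3": "podcast transcript on luxury brands",
}
vector_hits = ["note-1", "note-3", "note-2"]   # e.g. from embedding search
keyword_hits = ["note-2", "note-1"]            # e.g. from full-text search

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
top = rerank("quartz crisis brands", fused, texts, overlap_score)
print(fused)
print(top)
```

In a real system the two hit lists would come from an embedding index and a full-text index, and `overlap_score` would be replaced by a trained cross-encoder. The fusion step stays the same because it only looks at ranks, which makes it easy to add or remove a search layer.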

The result, he writes, is "a system that increasingly feels not just like an extra employee, but closer to 20." 7 The specific gain is not that the system does his thinking — it is that it removes the logistics: "the attention casino of the modern web," the tab-switching, the search friction. "I had always hoped The Generalist would grow large enough for it to make sense for me to hire such a research assistant; now, I have been granted a dozen, each with a very strong grasp on what I am likely to find most relevant." 7

What this means for your decisions:

Gabriele's architecture is a working blueprint you can adapt rather than a case study to admire from a distance. Two principles are worth extracting directly. First, the cross-encoder reranker trained on your own query-passage pairs is the specific step that makes the library actually useful rather than generically searchable — it encodes your judgment about relevance, not a model's average. Second, the system's design principle is "how your mind works," not "how the general-population app is built." For a founder, this is an argument for spending a concentrated week building an internal knowledge system shaped around your specific decision-making context rather than adopting whatever AI tool is trending. The investment compounds: every article you read, every customer call you take, every market model you build goes into the retrieval layer rather than dissolving into memory.

Cover image: Roman mosaic from Riding the Leopard by Packy McCormick
