Copilot Billing Overhaul: AI Coding Tools, May 11

GitHub Copilot's switch to AI Credits consumption billing on June 1 is the week's most structurally significant pricing shift. Also: Cursor Bugbot repricing, Claude Code Agent View launch, Amazon Q Developer sunset and Kiro transition, plus Windsurf, Replit, Aider, and Tabnine updates.

Three structural shifts landed this week. GitHub Copilot announced the most consequential pricing change since the product launched — consumption billing replaces flat subscriptions starting June 1. Amazon Q Developer set a sunset date, handing its users to a new product called Kiro. And Cursor, Claude Code, and Copilot each shipped SDK-level infrastructure that turns an "AI coding tool" into a programmable agent platform. The week's individual feature releases were largely good; the pricing and platform signals underneath them deserve careful reading before you renew or recommend anything.

The billing reckoning: Copilot rewrites its pricing contract

Starting June 1, 2026, every GitHub Copilot plan moves from premium-request quotas to GitHub AI Credits — 1 credit = $0.01 USD, billed against actual token consumption 1.

The base fees stay the same: Pro $10/month, Pro+ $39/month, Business $19/user/month, Enterprise $39/user/month. Code completions and Next Edit Suggestions don't consume credits at all — those remain effectively unlimited. What meters now is everything agentic: multi-turn agent sessions, cloud coding agent runs, model API calls above the base tier.
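To make the unit conversion concrete, here is a minimal sketch of how token usage might translate into AI Credits. The 1 credit = $0.01 rate comes from the announcement; the per-million-token prices in the example are placeholder assumptions rather than GitHub's published per-model rates, and `estimate_credits` is an illustrative name.

```python
CREDITS_PER_USD = 100  # from the announcement: 1 AI Credit = $0.01 USD


def estimate_credits(input_tokens: int, output_tokens: int,
                     usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Estimate AI Credits consumed by one agentic session.

    The per-million-token prices are caller-supplied assumptions;
    actual per-model rates are not given in this article.
    """
    usd = ((input_tokens / 1_000_000) * usd_per_m_input
           + (output_tokens / 1_000_000) * usd_per_m_output)
    return usd * CREDITS_PER_USD


# Hypothetical session: 2M input tokens at $1.00/M, 0.5M output tokens at $4.00/M.
session_credits = estimate_credits(2_000_000, 500_000, 1.00, 4.00)
print(f"{session_credits:.0f} credits (${session_credits / CREDITS_PER_USD:.2f})")
```

Under those assumed rates the session costs 400 credits, or $4.00. Completions and Next Edit Suggestions would never enter this calculation, since they do not consume credits.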

Two details matter for prompt engineers specifically:

No fallback model when credits run out. The old behavior of degrading to a cheaper model when you hit your quota is being removed. When credits are exhausted, agentic features stop. GitHub CPO Mario Rodriguez explained the change as aligning pricing with "the computational demands of agentic workloads," which the flat-fee structure "cannot sustainably absorb." 1

Code Review goes dual-meter. From June 1, each code review run bills both AI Credits (token consumption) and GitHub Actions minutes (on private repositories; public repos stay free). The review workflow runs on GitHub-hosted runners — switching to self-hosted runners avoids the Actions minutes charge 2.
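The dual-meter logic can be sketched as a small cost function. This is an illustration under stated assumptions: the per-minute Actions rate is a placeholder passed in by the caller, and the rule that minutes are billed only for private repositories on GitHub-hosted runners is this article's reading of the announcement, not a verified billing formula.

```python
def code_review_cost_usd(ai_credits: float, runner_minutes: float,
                         private_repo: bool, self_hosted: bool,
                         usd_per_actions_minute: float) -> float:
    """Estimated cost of one Copilot code review run under dual metering.

    usd_per_actions_minute is an assumed rate supplied by the caller.
    """
    credit_cost = ai_credits * 0.01  # 1 AI Credit = $0.01 USD
    # Assumption: Actions minutes are charged only for private repos
    # that run the review on GitHub-hosted runners.
    billable_minutes = runner_minutes if (private_repo and not self_hosted) else 0.0
    return credit_cost + billable_minutes * usd_per_actions_minute


# Hypothetical review: 50 credits, 4 runner minutes, placeholder rate of $0.01/minute.
hosted = code_review_cost_usd(50, 4, private_repo=True, self_hosted=False,
                              usd_per_actions_minute=0.01)
own_runner = code_review_cost_usd(50, 4, private_repo=True, self_hosted=True,
                                  usd_per_actions_minute=0.01)
print(f"GitHub-hosted: ${hosted:.2f}, self-hosted: ${own_runner:.2f}")
```

The AI Credits portion is the same in both cases; only the Actions minutes term drops out when the runner is self-hosted.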

Business and Enterprise plans get a three-month promotional credit buffer: $30/user (Business, up from $19) and $70/user (Enterprise, up from $39).

This announcement compounded an earlier disruption. On April 20, GitHub paused new signups for Pro, Pro+, and Student plans, tightened weekly usage limits, and removed Opus models from the Pro tier 3. The official explanation: agentic workflows changed Copilot's compute profile in ways the original plan structure didn't anticipate. GitHub VP of Product Joe Binder acknowledged: "We've heard your frustrations about usage limits and model availability, and we need to do a better job communicating the guardrails we are adding." Annual subscribers have until May 20 to cancel for a pro-rated refund.

The community reaction was pointed. The HN discussion of the billing announcement drew 767 points and 554 comments. On Reddit, the r/GithubCopilot megathread ran to 152+ comments, with multiple users reporting that the bill-preview tool — promised ahead of the June 1 change — had not appeared in their dashboards as of May 12. One user calculated that certain model multipliers represented close to a 900% effective price increase for annual subscribers using premium models. Multiple threads surfaced migration comparisons: Cursor, Claude Code, Gemini Code Assist (still free-tier), and OpenRouter-backed Continue.dev emerged as the main alternatives discussed.

On the model side, GPT-5.3-Codex becomes the Copilot base model for Business and Enterprise customers on May 17, displacing GPT-4.1 4. It's the first Copilot model with an explicit 12-month LTS guarantee (through February 2027), designed to give enterprise teams stability in the middle of the model churn cycle. Seven models were deprecated or slated for deprecation this week alone, including Claude Sonnet 4 (deprecated May 6), GPT-4.1 (deprecated May 7), Grok Code Fast 1 (deprecated May 8), and the scheduled removals of GPT-5.2 and GPT-5.2-Codex.

On the VS Code and JetBrains side, the week's feature work continued at pace. VS Code Copilot v1.116–1.119 shipped semantic indexing across all workspaces, a `githubTextSearch` tool for cross-repo grep, experimental `/chronicle` chat history (local DB), BYOK expanded to Business/Enterprise (OpenRouter, Microsoft Foundry, Google, Anthropic, OpenAI, Ollama), and remote browser tab sharing in agent mode 5. The JetBrains plugin added inline agent mode in public preview (agent capabilities embedded directly in the inline chat, without switching to a separate panel), plus fine-grained auto-approve controls at the command and file-edit level 6.

Copilot CLI shipped v1.0.45 on May 11 with `/autopilot` (toggle between interactive and autonomous modes), `/fork` for session branching, OTel GenAI semantic convention alignment, and ~1.5s faster startup. A previous release (v1.0.43, May 6) patched a security vulnerability: GHSA-9ccr-r5hg-74gf, a remote code execution bug in the handling of malicious bare repositories 7.

Cursor's sprint: SDK, security, Teams, and a $60B wildcard

Cursor shipped across several tracks this week.

May 6–7, Cursor 3.3: The most immediately useful release of the week. The May 6 build adds a per-agent context usage breakdown: a ring visualization showing how much context goes to rules, skills, MCPs, and subagents, with diagnostic detail for each. The May 7 build ships a full PR review experience inside the IDE: inline review threads, commit history, and a file-tree changes picker. Build in Parallel identifies independent plan segments and runs them via async subagents simultaneously; Split Changes into PRs slices large agent outputs into reviewable chunks with backup snapshots. Pinned skill pills give one-click access to frequent workflows, and `/multitask` brings async parallelization into the editor 8. MCP (Model Context Protocol, an open standard for connecting AI agents to external tools and data sources) connections also got stale token cleanup on re-authentication.

May 11, Microsoft Teams: Cursor can now be invoked from Teams channels via `@Cursor`. The agent picks the right repository and model from thread context, reads the full conversation, and creates a PR for review. Setup requires the Cursor dashboard integrations page 9.

May 11, Bugbot pricing change: The $40/seat/month subscription for Bugbot flips to usage-based billing, averaging $1.00–$1.50 per PR review depending on size. The change takes effect at the next billing renewal after June 8, 2026 for existing customers; annual subscribers who purchased before May 2026 won't see it until their renewal date. There are three new effort tiers: Default (0.7 bugs/run on average, 79% resolved at merge), High (0.95 bugs/run, 35% more bugs found at the same ~80% resolution rate), and Custom (natural language rules). Community reaction split: some teams see this as a reasonable pay-for-value model; others flagged that $1–1.50 per review adds up quickly on high-volume codebases 10.
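The Bugbot trade-off comes down to a break-even count of reviews per seat. The sketch below uses only the figures quoted above ($40/seat/month flat, $1.00–$1.50 average per review); it assumes the reported averages hold for a given team, which depends on PR size, and the function name is illustrative.

```python
SEAT_PRICE_USD = 40.00         # previous flat Bugbot price, per seat per month
PER_REVIEW_USD = (1.00, 1.50)  # reported average range per PR review


def break_even_reviews(seat_price: float, per_review: float) -> float:
    """Reviews per seat per month at which usage billing equals the flat fee."""
    return seat_price / per_review


low_rate, high_rate = PER_REVIEW_USD
print(f"At ${low_rate:.2f}/review: {break_even_reviews(SEAT_PRICE_USD, low_rate):.1f} reviews/seat/month")
print(f"At ${high_rate:.2f}/review: {break_even_reviews(SEAT_PRICE_USD, high_rate):.1f} reviews/seat/month")
```

Under these averages, a seat that triggers fewer than about 27 reviews a month pays less than before, and one that triggers more than 40 pays more. Anything in between depends on where the team's reviews fall in the price range.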

Background: Cursor SDK (April 29). The public beta of `@cursor/sdk` (`npm install @cursor/sdk`) lets developers build programmatic agents against the same runtime, harness, and models that power the Cursor desktop app. It supports local execution, cloud VMs, and self-hosted workers. Cloud sessions get dedicated VMs with a full dev environment, repo cloning, and strong sandboxing; agents continue running when the laptop sleeps. The SDK provides access to Composer 2, GPT, Claude, and Gemini models. SDK-launched agents surface in the Agents Window and web app. Billing is standard token consumption 11.

Background: Security Review beta (April 30). Two always-on security agents are available on Teams and Enterprise plans. The Security Reviewer checks every PR for vulnerabilities, auth regressions, privacy/data-handling risks, agent tool auto-approvals, and prompt injection. The Vulnerability Scanner runs scheduled scans for known CVEs, outdated dependencies, and configuration issues, with optional Slack delivery. Both integrate with existing SAST, SCA, and secrets scanners via MCP servers, and draw from the existing usage pool 12.

The SpaceX deal (April 21, background): SpaceX — which merged with xAI in February — announced an option to acquire Cursor for $60 billion, or pay $10 billion for the partnership work if the acquisition doesn't close. The deal gives Cursor access to xAI's Colossus supercomputer (claimed equivalent of 1 million Nvidia H100 chips) to scale Composer model training. Cursor CEO Michael Truell (25) confirmed on X: "Excited to partner with the SpaceX team to scale up Composer. A meaningful step on our path to build the best place to code with AI." 13 14

Analyst Tim Fernholz (TechCrunch) noted the deal "reveals weaknesses at both companies — neither Cursor nor xAI has proprietary models matching Anthropic and OpenAI's leading offerings, and Cursor still depends on selling access to Claude and GPT models even as those companies compete directly in the developer market." The announcement came the same week that Cursor was in talks to raise $2 billion at a $50 billion valuation, in a round led by Andreessen Horowitz with Nvidia and Thrive Capital participating.

Cursor's ARR reached $2 billion as of March 2026, up from $100 million in January 2025 — a 20× trajectory in 14 months 13.

One signal worth flagging: a widely-circulated r/cursor post this week detailed how spec-driven agentic coding is quietly degrading developer review skills. The author reported real-world failures (N+1 queries approved in review, Zod runtime validation added to hot paths, CSRF checks dropped in 400-line diffs) after roughly a year of agent-heavy workflows. Mid-level developers were showing "more vibes, less specific" review comments. The proposed countermeasure — rotating agent-free coding days and mandatory "prior decisions and why" sections in spec docs — reads less like an AI complaint and more like an organizational safety protocol. The post resonated because the failure mode it described isn't hypothetical 15.

Claude Code's expansion week: Agent View, Colossus, and Wall Street

Anthropic had a denser week than any single category covers.

May 11, Claude Code v2.1.139: The headline feature is Agent View (Research Preview). Run `claude agents` to open a single panel showing every Claude Code session (running, waiting for input, or completed) without separate terminal tabs. The `/goal` command lets you set completion conditions and have Claude work across turns autonomously until the goal is met, in interactive, `-p`, or Remote Control mode. The release also adds `/scroll-speed`, a hooks `args: string[]` exec form with `continueOnBlock`, `CLAUDE_PROJECT_DIR` for MCP stdio servers, and 40+ bug fixes covering the VSCode extension, Windows/WSL2 compatibility, MCP reconnect, and CJK/emoji rendering 16 17.

Week 19 (May 4–8) shipped v2.1.128 through v2.1.136: plugin support for `.zip` and URL loading, `worktree.baseRef`, auto mode `hard_deny`, hooks effort level control, and native PowerShell support on Windows without requiring Git Bash 18.

May 6, Colossus 1 deal: Anthropic signed an agreement with SpaceX to use the full capacity of the Colossus 1 data center in Memphis, Tennessee: 300+ MW, 220,000+ NVIDIA GPUs, online within a month. The same day, Anthropic doubled Claude Code's five-hour rate limits across Pro, Max, Team, and Enterprise plans, removed peak-hour throttling on Pro and Max, and significantly raised Opus model API rate limits 19. Elon Musk commented on X: "Everyone I met was highly competent and cared a great deal about doing the right thing. No one set off my evil detector." The deal is notable given Musk had publicly criticized Anthropic in 2024. It also followed Anthropic's earlier infrastructure commitments: 5 GW from Amazon, 5 GW from Google + Broadcom, a $30B Azure deal with Microsoft + NVIDIA, and a $50B Fluidstack agreement.

May 5, financial AI agents: Anthropic released 10 pre-built financial AI agent templates as Claude Cowork plugins (Pitch Builder, Meeting Preparer, Earnings Reviewer, Model Builder, Market Researcher, Valuation Reviewer, GL Reconciler, Month-End Closer, Statement Auditor, and KYC Screener), packaged as open-source cookbooks on GitHub (github.com/anthropics/financial-services) 20. Claude now works directly inside Microsoft Excel, PowerPoint, Word, and Outlook (beta), carrying context across applications. Eight new data connectors went live: Dun & Bradstreet, Fiscal AI, Financial Modeling Prep, Guidepoint, IBISWorld, SS&C Intralinks, Third Bridge, and Verisk. Moody's launched an MCP application embedding 600M+ company credit ratings into Claude.

Dario Amodei and Jamie Dimon (JPMorganChase) shared a stage for the first time at a Fortune event. Dimon said he spent 20 minutes with Claude Code over a weekend building a dashboard covering asset swaps and Treasury bid-ask spreads: "It was very accurate about what I wanted." 21 Existing institutional Claude Code customers include Goldman Sachs, Citi, Visa, Citadel, and Walleye Capital, whose CFO reported that 100% of Walleye employees use Claude Code.

May 4, enterprise JV: Anthropic, Blackstone, and Hellman & Friedman each contributed roughly $300 million; Goldman Sachs contributed $150 million; Apollo, General Atlantic, Leonard Green, GIC, and Sequoia Capital also participated, totaling approximately $1.5 billion (WSJ figure, unconfirmed by Anthropic). The stated goal is an AI-native enterprise services company — "forward-deployed engineering operations" that embed Claude into mid-market company operations 21.

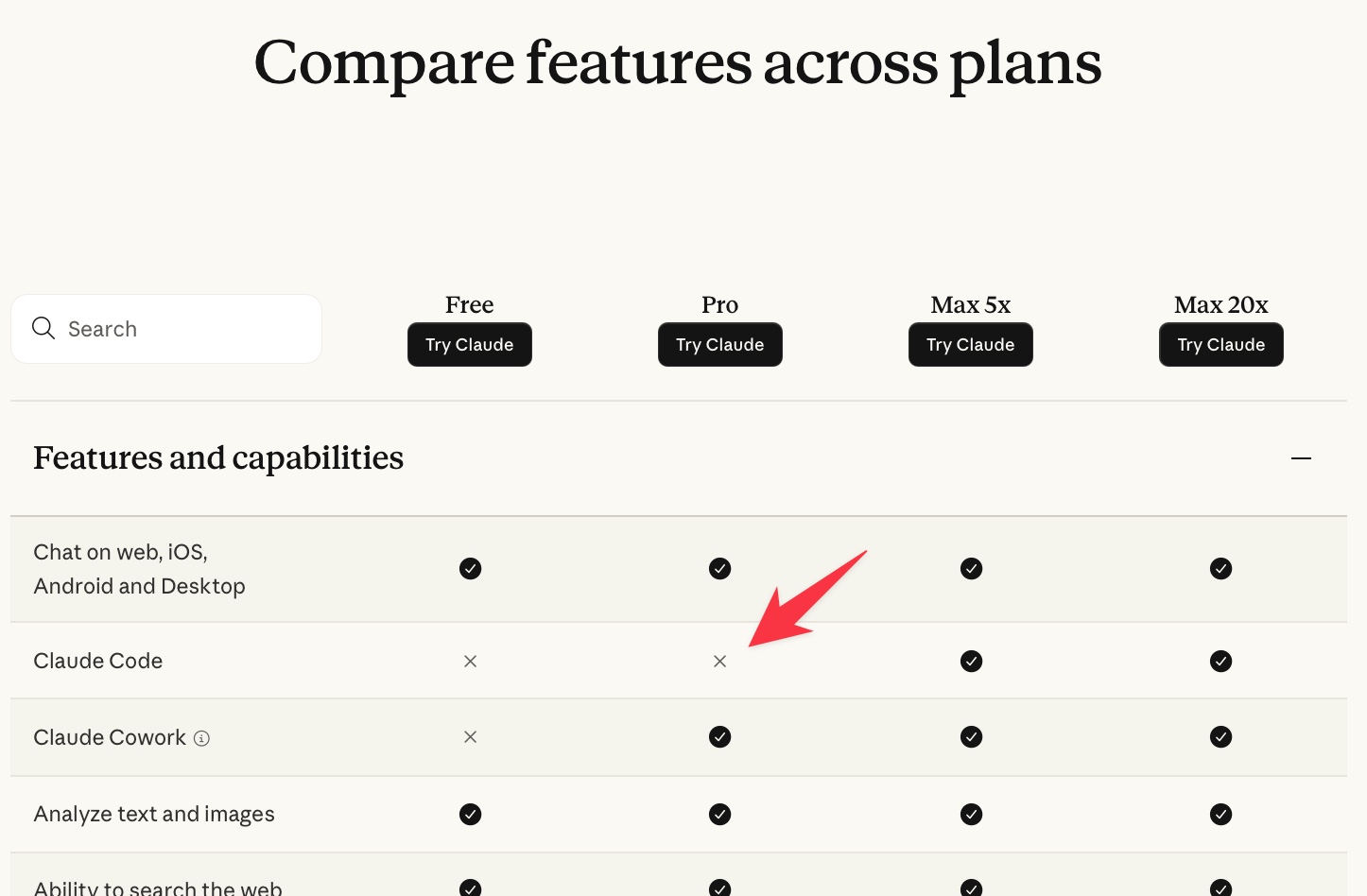

The April 22 pricing episode: Anthropic quietly removed Claude Code from the Pro ($20/month) feature list, making it appear exclusive to Max ($100/month). Internet Archive snapshots confirmed that the prior day's page still showed Claude Code checked for Pro. Several hours later the page reverted. Anthropic's Head of Growth Amol Avasare described it as an A/B test shown to approximately 2% of new signups and said the rollout had accidentally updated the main pricing page and documentation. Developer and educator Simon Willison was unconvinced: "I don't buy the '~2% of new prosumer signups' thing, since everyone I've talked to is seeing the new pricing grid." 22 The HN discussion drew 683 points and 642 comments. Claude Code currently remains included in Pro.

Image from Is Claude Code going to cost $100/month?

The real signal isn't whether that test was an accident. Avasare acknowledged that the current plans "were not built for this": Max was designed for heavy chat usage, and agents that run for hours didn't exist at launch. The pricing page incident reads as Anthropic testing how much friction the market will accept before a repricing. The Colossus deal and rate limit doubling this week may be buying goodwill for when that repricing arrives.

Competitive context worth noting: download data circulating on X this week puts Codex CLI weekly downloads at 17× Claude Code's, with OpenCode already at roughly half of Claude Code's install base. Claude Code is the highest-capability tool in many benchmarks; it's also the most tightly coupled to a single provider's pricing decisions.

The rest of the field

Amazon Q Developer → Kiro. On May 12, AWS announced end-of-support for Amazon Q Developer IDE plugins and paid subscriptions — EOS date April 30, 2027. New signups blocked from May 15, 2026; existing subscriptions can still add users until EOS. Opus 4.6 is removed from Q Developer Pro on May 29. AWS is directing users to Kiro, a spec-driven agentic development environment featuring Specs (structured task definitions), Hooks (event-driven automation), Steering files (persistent codebase instructions), custom subagents, and Powers 23. This is a significant repositioning — Q Developer was a broad assistant product; Kiro is explicitly workflow-opinionated. Teams currently using Q Developer's VS Code or JetBrains plugins should evaluate the migration path before the May 15 new-signup cutoff.

Windsurf (Codeium). Three moves, one from this week and two from April. Devin Review and Quick Review opened to all self-serve users on May 6 (v2.2.17), with a two-week free trial 24. On April 28, Windsurf shipped Devin for Terminal: Devin, Cognition's AI software engineering agent, is now available as a Rust-based CLI agent that runs locally with cloud handoff, is up to 30% more token-efficient than the Cascade agent, and supports Opus 4.7, GPT-5.5, and SWE-1.6. The April 6 release (v1.9600.38) introduced an adaptive model router that dynamically selects the best model per task, with fixed transparent token pricing ($0.50/1M input, $2.00/1M output, $0.10/1M cache reads) and per-response token counts shown in the model picker. Windsurf has shipped 16+ versions since February, a pace matched only by Claude Code this cycle.
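Because the router's rates are fixed and the model picker shows per-response token counts, the cost of a response can be reproduced by hand. The sketch below uses the three rates quoted above; the token counts in the example are made up for illustration, and the function is not part of any Windsurf API.

```python
# Per-million-token rates quoted above for Windsurf's adaptive model router.
USD_PER_M = {"input": 0.50, "output": 2.00, "cache_read": 0.10}


def response_cost_usd(input_tokens: int, output_tokens: int,
                      cache_read_tokens: int = 0) -> float:
    """Cost of one response from its token counts and the fixed rates."""
    counts = {
        "input": input_tokens,
        "output": output_tokens,
        "cache_read": cache_read_tokens,
    }
    return sum(counts[kind] / 1_000_000 * rate for kind, rate in USD_PER_M.items())


# Hypothetical response: 40k input, 5k output, 200k cache-read tokens.
cost = response_cost_usd(40_000, 5_000, 200_000)
print(f"${cost:.4f}")
```

With those counts the three components are $0.02, $0.01, and $0.02, for a total of $0.05. Output tokens dominate per token, but long cached contexts can still make up a large share of the bill.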

Replit. Security Center 2.0 launched May 7 with bulk CVE remediation across all projects, SBOM generation, dependency scanning triggered on new CVE publication, and per-project "Fix with Agent" that creates patch tasks 25. On May 6, Private Publishing expanded from Pro/Enterprise to Core and Starter plans, with External Access Tokens (HTTP header or URL query parameter auth, with optional expiration) for all private apps 26. App Monitoring (April 29) added email alerts on downtime and let the Replit Agent read production logs and databases (read-only) to diagnose failures 27.

Aider v0.83.0 (May 9). Support for Gemini 2.5 Pro preview (05-06) and Qwen3-235b was added. With OpenRouter model-parameter auto-fetching, Aider now pulls `thinking_tokens` and `reasoning_effort` values directly from the provider without manual config. OCaml repo-map support was added; Python 3.9 support was dropped. One detail from the release notes that captures something real: 54% of the code in this release was written by Aider itself 28.

Tabnine. The April recap (published May 6) covered Plan Mode in the CLI (preview agent intentions before execution), CLI Extensions for third-party modules, an `/ide` command bridging CLI and IDE workflows, Generalist Agent mode for multi-domain tasks, token consumption and cost APIs, and per-team quota enforcement. The April 9 Tabnine 6.1 release added CLI sandboxing with per-command permissions (auto-approve/require-confirm/disable) and workspace-scoped file operation restrictions that block access to /etc/passwd, ~/.ssh, and paths outside the active workspace by default 29 30.

Gemini Code Assist released only bug fixes this week (VS Code 2.81.0, May 8). The last feature release was Gemini 3.1 Pro and 3.0 Flash in preview on March 13. Google I/O 2026 is expected to change that: reports point to Gemini 4 and a repositioned "Gemini Agent" product.

Continue's most recent stable release remains v1.2.22 (March 27), which falls outside this issue's primary window.

Outside the incumbents: Hermes, Vercel Skills, Codex-Spark

Three signals from the ecosystem edge that are moving faster than their install base suggests.

Hermes Agent v0.13.0 "The Tenacity Release" (May 7, Nous Research, 145k GitHub stars). The headline feature is a self-improving learning loop — the agent creates skills from experience and nudges itself to persist knowledge between sessions. It supports the agentskills.io open standard (same as Vercel Skills, making skills cross-compatible), 40+ built-in tools across web search, browser automation, terminal execution, and delegation, seven terminal backends (local, Docker, SSH, Singularity, Modal, Daytona, Vercel Sandbox), and messaging integration across Telegram, Discord, Slack, WhatsApp, Signal, and Email 31.

The r/hermesagent community this week provided concrete benchmarks that Hermes's own docs don't: one user (Suitable-End9642) built a 7-agent autonomous trading system with a 4-tier risk classification, a Telegram approval flow for critical orders, and a GitHub audit trail, at a total monthly cost, including infrastructure, of $6–12. Another user (SelectionCalm70) built an Odoo ERP integration that lets an agent manage inventory, purchase orders, and accounting through chat: "Feels like agents + ERP systems are going to be a massive use case." Hermes includes a migration tool from OpenClaw (`hermes claw migrate`) that imports SOUL.md, memories, skills, and API keys, a direct signal of where the tool positions itself competitively.

Vercel Skills / skills.sh (18k GitHub stars, 91k total installs). The `npx skills add <repo>` CLI installs SKILL.md-based capability definitions into any of 51+ supported coding agents: Claude Code, Cursor, Codex, Copilot, Windsurf, Gemini CLI, Hermes Agent, OpenClaw, and 40+ others 32 33. Skills use the agentskills.io open standard, making them portable across all compliant agents. The top publishers by install volume are Microsoft (Azure skills: 4.6M+ installs across 14 skills), Anthropic (frontend-design: 396k), and Vercel Labs (vercel-react-best-practices: 390k). The "find-skills" discovery skill has 1.5M installs alone. This is the interoperability layer that makes multi-agent setups tractable across tools, and it is worth tracking before deciding which agent ecosystem to standardize on.

Codex-Spark on Cerebras. Codex-Spark is a low-latency variant of OpenAI's Codex coding agent running on Cerebras wafer-scale chips (model ID `gpt-5.3-codex-spark`, not available via public API). It grew from roughly 600,000 weekly active users in January 2026 to over 4 million by May. Cerebras has a $20 billion take-or-pay agreement with OpenAI through 2028 and is pursuing an IPO at an approximately $40 billion valuation (ticker: CBRS) 34. The strategic picture: Codex's no-extra-cost distribution (it is included in all paid ChatGPT plans), combined with Cerebras's capacity build, is a bet that low-latency, commodity-priced completions at scale can capture market share while Claude Code and Cursor compete on quality. The IPO, if it closes, will be a public test of whether that compute bet prices in.

Developer pulse

A few readings from the community this week.

A Hacker News post titled "Software engineering may no longer be a lifetime career" (400 points, 647 comments, May 11) linked to an essay arguing that AI coding tools have changed the career trajectory of software engineers structurally, not just incrementally. It surfaced alongside threads titled "I'm going back to writing code by hand" and "What we lost the last time code got cheap" 35. The concurrent r/cursor thread on spec-driven skill atrophy gave the HN discussion a concrete, first-hand anchor.

The Uber anecdote circulating on X this week was blunt: "Uber burned their full 2026 Claude Code budget in four months. This keeps happening: AI tool pricing is usage-based but engineering budgets are annual." That mismatch — consumption-based metering against headcount-based annual budgets — is the quiet operational problem that Copilot's June 1 billing change is going to force into the open for a lot of engineering organizations.
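The mismatch is easy to quantify. The sketch below projects when an annual budget runs out at a given monthly burn; the dollar figures are hypothetical and chosen only so that the budget lasts four months, matching the anecdote, and both function names are illustrative.

```python
def months_until_exhausted(annual_budget: float, monthly_spend: float) -> float:
    """Months until the annual budget is gone at a constant monthly spend."""
    if monthly_spend <= 0:
        raise ValueError("monthly_spend must be positive")
    return annual_budget / monthly_spend


def projected_spend_multiple(annual_budget: float, monthly_spend: float) -> float:
    """Projected 12-month spend expressed as a multiple of the budget."""
    return 12 * monthly_spend / annual_budget


# Hypothetical numbers: a $1.2M annual budget burned at $300k per month.
budget, burn = 1_200_000, 300_000
print(f"Budget lasts {months_until_exhausted(budget, burn):.1f} months")
print(f"Full-year spend is {projected_spend_multiple(budget, burn):.1f}x the budget")
```

A budget that lasts four months implies a full-year spend of three times the plan, which is why tracking consumption monthly against an annual allocation matters more under usage-based billing than under per-seat pricing.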

The r/ClaudeCode community's frustration with rate limits converged on a specific confusion: Claude Code's all-models weekly quota and the Sonnet-only weekly quota are separate meters, but spending the all-models quota blocks access even when the Sonnet-only quota shows 80%+ remaining. Whether or not Anthropic's Colossus deal resolves this, the quota architecture needs clearer documentation.

Cover image: color-coded programming environment (Pexels / Marek Prášil)

References

- 1. GitHub Copilot is moving to usage-based billing
- 2. GitHub Copilot code review will start consuming Actions minutes
- 3. Changes to GitHub Copilot Individual plans
- 4. GPT-5.3-Codex long-term support in GitHub Copilot
- 5. GitHub Copilot in VS Code, April releases
- 6. Inline agent mode in preview for JetBrains IDEs
- 7. Copilot CLI releases
- 8. Cursor Changelog
- 9. Cursor in Microsoft Teams
- 10. Updates to Bugbot for Teams and Individuals
- 11. Build programmatic agents with the Cursor SDK
- 12. Cursor Security Review
- 13. SpaceX is working with Cursor and has an option to buy the startup for $60B
- 14. Cursor partners with SpaceX on model training
- 15. Spec-driven agentic coding is quietly making us worse
- 16. Agent View in Claude Code
- 17. Claude Code releases
- 18. Claude Code What's New
- 19. Higher usage limits for Claude and a compute deal with SpaceX
- 20. Agents for financial services
- 21. Jamie Dimon and Dario Amodei shared a stage for the first time
- 22. Is Claude Code going to cost $100/month?
- 23. Amazon Q Developer end-of-support announcement
- 24. Windsurf Changelog
- 25. Replit Security Center 2.0
- 26. Replit private publishing expansion
- 27. Replit App Monitoring
- 28. Aider releases
- 29. Governance in Tabnine 6.1
- 30. Tabnine April 2026 recap
- 31. Hermes Agent GitHub
- 32. Vercel Labs Skills
- 33. skills.sh directory
- 34. Memory Is the Moat
- 35. HN: Software engineering may no longer be a lifetime career
