With the help of Claude Mythos Preview, the Firefox team fixed more security bugs in April than in the past 15 months combined.

Agent Dev Weekly — May 5–12, 2026

This inaugural issue covers the week Anthropic executed a six-day multi-front platform push — shipping Dreaming, Outcomes, and Multiagent orchestration — while the practitioner community rallied around a counter-narrative: deterministic control flow beats fully autonomous agents in production.

Research brief

Two things defined this week in the AI agent developer space. First, Anthropic executed a coordinated, multi-front infrastructure push — new managed-agent capabilities, a cloud-native AWS distribution path, rate limits restructured around multi-agent workloads, and a vertical market play in finance — all landing within a six-day window. Second, the practitioner community reached something that looks like consensus: more autonomy is not the goal. Constrained, verifiable agents are winning in production, and the conversation has moved from "should I build an agent?" to "which tasks are mature enough to give to a system?"

Those two forces — platform expansion and production discipline — run through everything below.

What shipped this week

Claude Managed Agents: Dreaming, Outcomes, and Multiagent orchestration

Anthropic's May 6 "Code with Claude" developer conference (simultaneous in San Francisco, London, and Tokyo) delivered three additions to Claude Managed Agents. 1

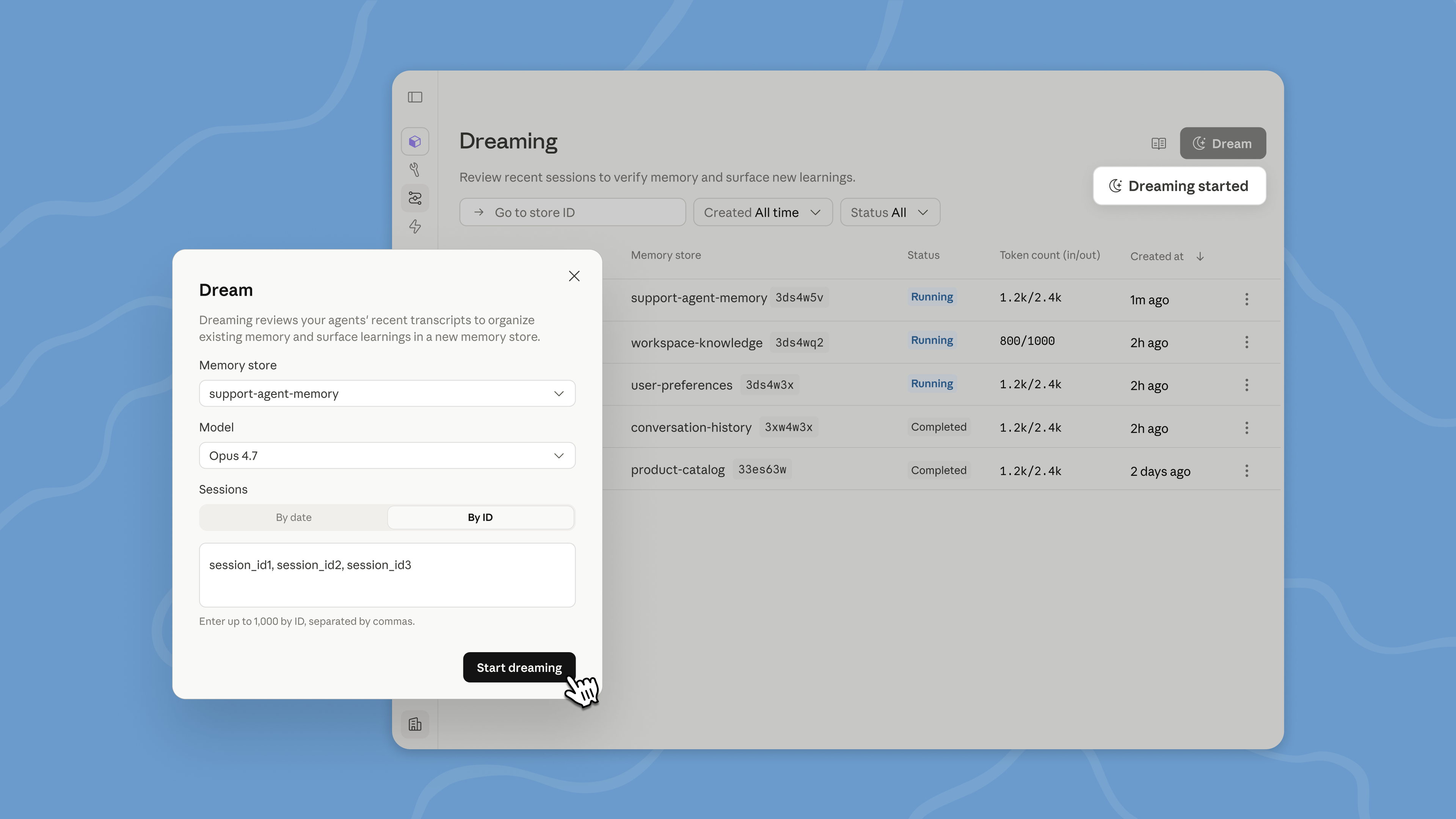

Dreaming (research preview) runs as a scheduled background process — it reviews an agent's past sessions, extracts behavioral patterns, and rewrites memory to stay high-signal as it grows. The key distinction from standard context compaction: Dreaming spans multiple agents and sessions, surfacing recurring mistakes and converged workflows that no single agent could observe alone. Harvey, the legal AI company, reported roughly a 6x improvement in task completion rates after enabling it, with agents retaining filetype workarounds and tool-specific patterns across sessions.

Image from: New in Claude Managed Agents
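Anthropic hasn't published how Dreaming works internally. What follows is only a minimal sketch of the cross-session idea, under assumed data shapes: each session log is a list of event dicts, recurring error signatures are counted across sessions, and only patterns seen in several sessions are promoted to memory. `consolidate_memory`, `min_sessions`, and the event fields are hypothetical names, not part of the Managed Agents API.

```python
from collections import Counter


def consolidate_memory(sessions, min_sessions=2):
    """Promote error patterns that recur across sessions into shared memory.

    Hypothetical data model: each session is a list of event dicts, and error
    events look like {"kind": "error", "signature": str, "fix": str or None}.
    Returns {signature: fix} for signatures seen in >= min_sessions sessions.
    """
    seen_in = Counter()  # signature -> number of distinct sessions containing it
    fixes = {}           # signature -> latest fix recorded for it
    for session in sessions:
        signatures_here = set()
        for event in session:
            if event.get("kind") == "error":
                signatures_here.add(event["signature"])
                if event.get("fix"):
                    fixes[event["signature"]] = event["fix"]
        seen_in.update(signatures_here)  # count each signature once per session
    # One-off errors stay out of memory; only cross-session patterns survive.
    return {
        sig: fixes.get(sig, "(no fix recorded)")
        for sig, count in seen_in.items()
        if count >= min_sessions
    }


sessions = [
    [{"kind": "error", "signature": "docx_parse_fail", "fix": "convert to PDF first"}],
    [{"kind": "tool_call", "name": "search"},
     {"kind": "error", "signature": "docx_parse_fail", "fix": "convert to PDF first"}],
    [{"kind": "error", "signature": "timeout"}],
]
memory = consolidate_memory(sessions)
print(memory)  # {'docx_parse_fail': 'convert to PDF first'}
```

The threshold is what separates this from single-session compaction: an error that only one session ever hit never makes it into shared memory.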

Outcomes is a rubric-based grader that evaluates agent output in its own context window — independent of the agent's reasoning — then loops until the output meets criteria. Anthropic's internal benchmarks show up to 10 percentage-point gains in task success, with document generation specifically up 8.4% for docx and 10.1% for pptx files.
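The generate, grade, retry loop behind Outcomes can be sketched like this. It is not the Managed Agents API: `generate` and `grade` are hypothetical stand-ins for two separate model calls, and a rubric item counts as satisfied if it appears as a substring. What the sketch does mirror is the separation described above: the grader sees only the output and the rubric, never the generator's reasoning.

```python
from dataclasses import dataclass, field


@dataclass
class Grade:
    passed: bool
    feedback: list = field(default_factory=list)  # rubric items still unmet


def grade(output: str, rubric: list) -> Grade:
    # Hypothetical grader. A real one would be a separate model call that sees
    # only `output` and `rubric`; here each rubric item is a required substring.
    missing = [item for item in rubric if item not in output]
    return Grade(passed=not missing, feedback=missing)


def generate(task: str, feedback: list) -> str:
    # Hypothetical generator: revises its draft using only the grader's feedback.
    draft = f"Report on {task}."
    return draft + "".join(f" {item}." for item in feedback)


def run_with_outcome(task: str, rubric: list, max_iters: int = 3):
    """Generate, grade independently, and retry until the rubric passes."""
    feedback: list = []
    output = ""
    for attempt in range(1, max_iters + 1):
        output = generate(task, feedback)
        result = grade(output, rubric)
        if result.passed:
            return output, attempt, True
        feedback = result.feedback  # only the verdict flows back, not reasoning
    return output, max_iters, False  # budget exhausted; caller decides next step


output, attempts, ok = run_with_outcome("Q2 revenue", rubric=["Summary", "Sources"])
print(attempts, ok)  # 2 True
```

Returning the pass/fail flag alongside the output matters: when the iteration budget runs out, the caller still needs to know the rubric was never met.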

Multiagent orchestration graduates to public beta: a lead agent breaks jobs into pieces and delegates to specialist subagents with their own models, prompts, and tools. Subagents work in parallel on a shared filesystem. Webhooks support asynchronous notification when outcomes-based tasks complete.
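A minimal sketch of the lead-agent/subagent shape using only the standard library, assuming a naive one-piece-per-specialist split. `SUBAGENTS`, `run_subagent`, and `lead_agent` are hypothetical; each "subagent" is a stub rather than a model call, and a temporary directory stands in for the shared filesystem.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path

# Hypothetical specialist configs: each subagent has its own model, prompt, and tools.
SUBAGENTS = {
    "research": {"model": "model-a", "prompt": "Gather facts.", "tools": ["search"]},
    "writing": {"model": "model-b", "prompt": "Draft prose.", "tools": []},
}


def run_subagent(name: str, piece: str, workdir: Path) -> Path:
    # Stand-in for a model call; the result is written to the shared filesystem.
    cfg = SUBAGENTS[name]
    out = workdir / f"{name}.txt"
    out.write_text(f"[{cfg['model']}] {cfg['prompt']} -> {piece}")
    return out


def lead_agent(job: str, workdir: Path) -> str:
    # Naive decomposition: one piece of the job per specialist.
    pieces = {name: f"{job} ({name} part)" for name in SUBAGENTS}
    with ThreadPoolExecutor(max_workers=len(pieces)) as pool:
        futures = [pool.submit(run_subagent, n, p, workdir) for n, p in pieces.items()]
        paths = [f.result() for f in futures]  # wait for every subagent to finish
    # The lead agent merges whatever the subagents left on disk.
    return "\n".join(p.read_text() for p in sorted(paths))


with tempfile.TemporaryDirectory() as d:
    report = lead_agent("market brief", Path(d))
print(report)
```

Threads are sufficient here because real subagent calls would spend nearly all their time waiting on network I/O.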

One structural implication worth flagging: these changes land alongside Anthropic doubling Claude Code's 5-hour rate limits across all paid tiers and raising Opus API input token throughput from roughly 30k to 348k tokens/min (about 11.6×) and output from 8k to 80k tokens/min (10×). 2 Before this week, running five subagents simultaneously, each processing a 50k-token context, would have collided with rate limits. Now it's a viable production architecture.
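The five-subagent example is easy to check with back-of-the-envelope arithmetic. This sketch assumes every subagent sends its full 50k-token context as input once and that the per-minute input limit is the only constraint; it ignores output tokens, prompt caching, and other limit types.

```python
def minutes_of_input_budget(subagents: int, context_tokens: int, input_tpm: int) -> float:
    """Minutes of per-minute input quota consumed if every subagent reads its context once."""
    return subagents * context_tokens / input_tpm


total_tokens = 5 * 50_000                             # 250,000 input tokens
before = minutes_of_input_budget(5, 50_000, 30_000)   # old Opus input limit
after = minutes_of_input_budget(5, 50_000, 348_000)   # new Opus input limit
print(f"{total_tokens:,} tokens: {before:.1f} min before, {after:.2f} min after")
# 250,000 tokens: 8.3 min before, 0.72 min after
print(f"input throughput ratio: {348_000 / 30_000:.1f}x")
# input throughput ratio: 11.6x
```

Under the old limit, one round of five parallel reads would have consumed more than eight minutes of input quota; under the new one it fits inside a single minute.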

Claude Platform on AWS — generally available (May 11)

Claude Platform on AWS went GA on May 11. 3 The short version: it is the native Claude API (Managed Agents, code execution, Agent Skills, MCP connector, prompt caching, citations, batch processing, same-day new models) accessed through AWS-native controls — IAM authentication, CloudTrail audit logging, single AWS invoice with commitment retirement.

The distinction from Claude on Amazon Bedrock matters: on Bedrock, AWS acts as the data processor. On Claude Platform on AWS, Anthropic operates the service, and data stays outside the AWS boundary. For teams already committed to AWS infrastructure but wanting full Claude feature parity without maintaining a separate vendor relationship, this closes that gap.

Available in most AWS commercial regions, with global and U.S. inference geographies.

AWS Agent Toolkit — 3 plugins, 40+ skills (May 6)

AWS launched Agent Toolkit for AWS, a production-ready suite designed to reduce errors and token costs when AI coding agents build on AWS. 4 It ships as three agent plugins:

- AWS Core — full-stack app development on AWS

- AWS Data Analytics — data pipelines and query tasks

- AWS Agents — Bedrock AgentCore agents

The 40+ skills span infrastructure-as-code, storage, analytics, serverless, containers, and AI services. IAM-based guardrails control agent actions; CloudWatch and CloudTrail provide observability; code executes in sandboxed environments. The AWS MCP Server is now generally available in US East (N. Virginia) and Europe (Frankfurt).

This replaces AWS Labs MCP servers and plugins — no additional charge beyond AWS resource usage.
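IAM guardrails for agents rest on IAM's evaluation order: an explicit deny overrides any allow, and anything not explicitly allowed is denied. The sketch below mimics that order for an agent's proposed actions using wildcard action patterns. It is a simplified illustration, not the toolkit's implementation: the policy is hypothetical, and real IAM also evaluates resources, conditions, and multiple policy types.

```python
from fnmatch import fnmatchcase

# Hypothetical guardrail policy for a coding agent, in IAM-style action syntax.
POLICY = {
    "allow": ["s3:*", "lambda:InvokeFunction", "cloudformation:Describe*"],
    "deny": ["s3:Delete*", "iam:*"],
}


def is_permitted(action: str, policy: dict = POLICY) -> bool:
    """Simplified IAM-style check: explicit deny > explicit allow > implicit deny.

    Ignores resources, conditions, and the other policy types real IAM evaluates.
    """
    action = action.lower()  # IAM action names are case-insensitive

    def matches(patterns: list) -> bool:
        return any(fnmatchcase(action, p.lower()) for p in patterns)

    if matches(policy["deny"]):
        return False                 # an explicit deny always wins
    return matches(policy["allow"])  # no matching allow means implicit deny


for proposed in ["s3:GetObject", "s3:DeleteBucket", "iam:CreateUser", "ec2:RunInstances"]:
    print(proposed, is_permitted(proposed))
# s3:GetObject True, s3:DeleteBucket False, iam:CreateUser False, ec2:RunInstances False
```

`s3:DeleteBucket` shows the override: it matches the broad `s3:*` allow, but the narrower deny wins.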

OpenAI: Agents SDK in TypeScript and Realtime 2 voice model

OpenAI expanded the Agents SDK to TypeScript on May 6, adding sandbox agent support and an open-source harness alongside the existing Python implementation. 5

On May 7, the company released Realtime 2 (`gpt-realtime-2`), a voice-to-voice model with configurable reasoning for agent applications, alongside Realtime Translate (streaming speech translation) and Realtime Whisper (streaming speech-to-text). A new OpenAI Developers plugin for Codex also landed the same day, aimed at building AI apps and agents inside Codex sessions.

The Responses API gained a `return_token_budget` parameter on May 11; it lets web search runs on GPT-5 and later models opt into longer execution when the task warrants it.

Image from: The next evolution of the Agents SDK

Cloudflare Dynamic Workflows — per-tenant durable execution (May 9)

Cloudflare open-sourced Dynamic Workflows under MIT: a ~300-line TypeScript library that extends Cloudflare's durable execution engine to support workflow code that differs per tenant, agent, or request at runtime. 6
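The two ideas in play, durable steps and per-tenant code selected at runtime, can be sketched in a few lines of Python. The names here (`Step.do`, `TENANT_WORKFLOWS`, `run`) are hypothetical stand-ins, not Cloudflare's TypeScript API, and a plain dict plays the role of the engine's durable storage.

```python
class Step:
    """Toy durable-step context (hypothetical; not Cloudflare's API).

    Each step's result is persisted under its name, so replaying the workflow
    after a crash or hibernation skips steps that already completed.
    """

    def __init__(self, store: dict):
        self.store = store   # stands in for the engine's durable storage
        self.executed = []   # names of steps whose bodies actually ran this time

    def do(self, name, fn):
        if name not in self.store:
            self.store[name] = fn()
            self.executed.append(name)
        return self.store[name]


# Hypothetical per-tenant workflow code, selected at runtime by tenant ID.
def onboarding_acme(step: Step) -> str:
    user = step.do("create-user", lambda: {"id": 1, "plan": "pro"})
    step.do("send-welcome", lambda: f"welcome sent to user {user['id']}")
    return user["plan"]


def onboarding_globex(step: Step) -> str:
    return step.do("create-user", lambda: {"id": 2, "plan": "free"})["plan"]


TENANT_WORKFLOWS = {"acme": onboarding_acme, "globex": onboarding_globex}


def run(tenant: str, store: dict):
    # Loader role: resolve this tenant's code on every wake, then replay it.
    step = Step(store)
    result = TENANT_WORKFLOWS[tenant](step)
    return result, step.executed


acme_store = {}
print(run("acme", acme_store))  # ('pro', ['create-user', 'send-welcome'])
print(run("acme", acme_store))  # ('pro', []) because replay reuses stored results
```

The second call returns the same result without running either step body, which is the property that lets a workflow hibernate and resume safely.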

Standard Workflow primitives remain intact: pause/resume, retries, hibernation, `step.sleep()`, and `step.waitForEvent()`. A Worker Loader sits between the engine and tenant code, routing execution to the correct tenant on wake. The relevant competitive gap: Temporal and Inngest don't offer dynamic per-tenant code loading at the V8 isolate level. Available now on npm as `@cloudflare/dynamic-workflows`.

CrewAI: four alpha releases in seven days

CrewAI shipped four alpha builds between May 1 and May 8 (v1.14.5a1 through a4). 7 The headline structural change in this sprint: the CLI was extracted into a standalone `crewai-cli` package (a3), and the GitPython dependency was bumped to >=3.1.47 for a security fix (a3). v1.14.5a1 added a `restore_from_state_id` kickoff parameter for restoring a run from checkpointed state, a feature CrewAI CEO Joao Moura (@joaomdmoura) separately expressed enthusiasm for this week.

The stable v1.14.4 (released April 30, outside this week's window) added Bedrock V4 support, Daytona sandbox tools, checkpoint and fork for standalone agents, Azure Responses API support, You.com MCP tools, and a ~29% reduction in MCP SDK cold-start time, which is context for why the alpha sprint is moving this fast.

Repo currently at 51.2k GitHub stars.

API changes requiring action

Google Gemini Interactions API — breaking changes, deadline June 8

This is the migration you cannot defer. Google announced breaking changes to the Gemini Interactions API (v1beta) on May 6, with a hard cutoff of June 8. 8

The core schema change: the `outputs` array is replaced by a `steps` array with a `type` discriminator, providing a structured timeline. New step types include `user_input`, `model_output`, `thought`, `function_call`, `google_search_call`, and `google_search_result`. `response_mime_type` is removed and replaced by a polymorphic `response_format` object, and `image_config` moves out of `generation_config` into `response_format`.

Google's stated reason: "The new schema replaces the outputs array with a steps array to support future capabilities like mid-flight steering and asynchronous tool calls."
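A minimal sketch of reading the new `steps` timeline and of opting in early. Only `steps`, `type`, the step type names, and the `Api-Revision: 2026-05-20` header come from Google's migration notes; the other field names (`text`, `query`, `name`), the sample payload, and the placeholder URL are assumptions, and authentication is omitted.

```python
import json
import urllib.request

# Assumed response shape. Only `steps`, `type`, and the type names come from
# Google's migration notes; "text" and "query" are illustrative guesses.
SAMPLE = {
    "steps": [
        {"type": "user_input", "text": "Weather in Paris?"},
        {"type": "thought", "text": "Need a search."},
        {"type": "google_search_call", "query": "Paris weather"},
        {"type": "google_search_result", "text": "18C, cloudy"},
        {"type": "model_output", "text": "It is 18C and cloudy."},
    ]
}


def final_text(response: dict) -> str:
    """Concatenate model_output steps: what code reading `outputs` usually wanted."""
    return "".join(
        s.get("text", "") for s in response["steps"] if s["type"] == "model_output"
    )


def timeline(response: dict) -> list:
    # Dispatch on the type discriminator; unknown or future step types are
    # skipped instead of raising, so new step kinds don't break this parser.
    handlers = {
        "function_call": lambda s: f"call {s.get('name')}",
        "google_search_call": lambda s: f"search {s.get('query')}",
        "model_output": lambda s: f"answer {s.get('text')}",
    }
    return [handlers[s["type"]](s) for s in response["steps"] if s["type"] in handlers]


def build_request(url: str, body: dict) -> urllib.request.Request:
    # Opt into the new schema before May 26 with the revision header.
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode(),
        headers={"Content-Type": "application/json", "Api-Revision": "2026-05-20"},
        method="POST",
    )


print(final_text(SAMPLE))  # It is 18C and cloudy.
print(timeline(SAMPLE))    # ['search Paris weather', 'answer It is 18C and cloudy.']
req = build_request("https://example.invalid/interactions", {"input": "hi"})  # placeholder URL
print(req.get_header("Api-revision"))  # 2026-05-20 (urllib capitalizes header names)
```

Filtering on a known set of types rather than indexing into a fixed position is the main change for code that previously read `outputs[0]`.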

Timeline:

- May 26: new schema becomes default

- June 8: old schema permanently removed

To opt in to the new schema before May 26, add the `Api-Revision: 2026-05-20` header to REST requests. The Python and JavaScript SDKs must both be at >=2.0.0.

Also on the Gemini changelog this week: Gemini 3.1 Flash-Lite is GA (`gemini-3.1-flash-lite`, May 7); the preview alias was deprecated May 11 and shuts down May 25. 9 File Search also now supports multimodal queries via `gemini-embedding-2`: images can be natively embedded and searched, with `media_id` and `page_numbers` in grounding metadata (May 5).

Anthropic rate limits: the multi-agent unlock

As noted above, Anthropic's rate limit restructuring on May 6 is not just a quality-of-life change. 10 The 1M-token context window existed before this week, but with input throughput at ~30k tokens/min, it was effectively unusable in production. At ~348k tokens/min, multi-agent architectures where several subagents simultaneously read large contexts become practical. Separately, Claude Code's 5-hour rate limit doubles across Pro, Max, Team, and Enterprise tiers, and peak-hour throttling for Pro and Max is removed.

The compute behind this: Anthropic signed a deal with SpaceX for over 300 megawatts of capacity (220,000+ NVIDIA GPUs), with stated interest in developing orbital AI compute capacity. 2

OpenAI deprecations to track

DALL·E model snapshots shut down May 12 (this week). 11 Self-serve fine-tuning creation closes for organizations that have not used it previously, effective May 7. Legacy model snapshots, including `computer-use-preview`, `gpt-4o-audio-preview`, and several others, shut down July 23.

Trending repos

The Agency — 96.2K stars, 147 agent roles

msitarzewski/agency-agents is the fastest-growing AI agent repo this cycle. It went from zero to 96.2K GitHub stars in roughly two weeks, with 68 contributors and 291 commits. 12

The concept is a collection of 147+ Markdown prompt templates, each structured as a specialized professional role: Engineering (29 roles), Design, Marketing, Sales, Finance, Product, Strategy, and eight others. Each file includes identity and personality traits, core tasks and workflows, technical deliverables with code examples, success criteria, and communication style. An install script (`./scripts/install.sh`) auto-detects which tools are present and configures accordingly.

Supported tools: Claude Code, Cursor, GitHub Copilot, Aider, Windsurf, Kimi Code, Antigravity, Gemini CLI, OpenCode, and OpenClaw. MIT license.
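The repo's installer is a shell script, and its exact detection logic isn't described here. As a rough illustration only, one common way to auto-detect installed tools is to probe `PATH` for each tool's CLI. The executable names below are assumptions, and editor-based tools such as Cursor or Windsurf would need a different signal.

```python
import shutil

# Assumed CLI executable names; check each tool's docs. Editor-based tools
# may need other signals (config directories, app bundles) instead of PATH.
TOOL_BINARIES = {
    "Claude Code": "claude",
    "Aider": "aider",
    "Gemini CLI": "gemini",
    "OpenCode": "opencode",
}


def detect_tools(which=shutil.which) -> list:
    """Return tools whose CLI is on PATH. `which` is injectable for testing."""
    return [tool for tool, binary in TOOL_BINARIES.items() if which(binary)]


print(detect_tools())  # result depends on what is installed on your machine
```

Injecting `which` keeps the detection testable without installing anything.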

The project originated in a Reddit thread. "Born from a Reddit thread and months of iteration, The Agency is a growing collection of meticulously crafted AI agent personalities," the readme states.

awesome-harness-engineering — 879 stars in 48 hours

ai-boost/awesome-harness-engineering is the first comprehensive awesome-list focused specifically on AI agent harness engineering as a discipline. 13 It reached 879 stars within two days of creation, with 75 forks and 88 commits. CC0 license.

The scope: Foundations (16 articles from OpenAI, Anthropic, Martin Fowler, Google, LangChain), Design Primitives (12 sub-categories: Agent Loop, Planning, Context, Tool Design, Skills & MCP, Permissions, Memory, Task Runners, Verification, Observability, Debugging, Human-in-the-Loop), Security & Sandbox, Evals & Verification, and reference implementations.

The maintainer's framing: "This list focuses on the harness, not the model. Every component here exists because the model can't do it alone — and the best harnesses are designed knowing those components will become unnecessary as models improve."

That last clause is the important part: treat the harness as temporary scaffolding, not permanent architecture.

LlamaIndex — +236 stars this week

run-llama/llama_index (49.3K total stars) gained 236 stars this week. 14 The latest release is v0.14.21 (April 21). The project positions itself as a document agent and OCR platform, which makes it worth watching as file-heavy agent workflows become more common given this week's file-processing expansions from Gemini and Anthropic.

What practitioners are saying

HN: stop giving the model the control flow

A Hacker News thread titled "Agents need control flow, not more prompts" 15 (May 11) crystallized an increasingly common pattern. The original poster described running Opus 4.6 and GPT 5.4 as high-level orchestrators over 200 Markdown files:

"This started breaking down after ~30 files. Sometimes it would miss a file. Sometimes it would triple-test a bundle of files and take 10 minutes instead of 3."

Switching to a deterministic harness — loop through each file, invoke the model per file, store results in an array — made the system reliably orders of magnitude faster. Multiple commenters reported the same experience across Opus 4.7 and GPT 5.5. The emerging pattern in the thread:

"I never tell Claude to 'go over this bunch of files and do this.' I tell it: 'write a program that goes over this bunch of files and do this.'"

The underlying principle, as one commenter put it: treat the LLM call as the atomic unit. Build the loop deterministically around it. This is not a workaround for weak models — it holds even with the current best models for high-volume, repetitive tasks.
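The "write a program that goes over the files" approach looks roughly like this. `call_model` is a hypothetical stand-in for one LLM API call; everything around it (ordering, iteration, result collection) is ordinary code, so no file can be skipped or processed three times.

```python
import tempfile
from pathlib import Path


def call_model(prompt: str) -> str:
    # Hypothetical stand-in for one LLM call; swap in a real client here.
    return f"summary({len(prompt)} chars)"


def process_files(directory: Path, instruction: str) -> list:
    results = []
    # Deterministic control flow: sorted order, each file exactly once.
    for path in sorted(directory.glob("*.md")):
        text = path.read_text()
        output = call_model(f"{instruction}\n\n{text}")  # the atomic unit
        results.append({"file": path.name, "output": output})
    return results


with tempfile.TemporaryDirectory() as d:
    for i in range(3):
        Path(d, f"doc{i}.md").write_text(f"# Doc {i}\n")
    results = process_files(Path(d), "Summarize this file.")
print([r["file"] for r in results])  # ['doc0.md', 'doc1.md', 'doc2.md']
```

Retries, rate limiting, or a thread pool can be wrapped around `call_model` without giving up the guarantee that every file is visited exactly once.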

r/AI_Agents: three simultaneous posts, one convergent argument

Three separate threads landed on r/AI_Agents on May 11 and reached similar conclusions from different starting points.

"Stop building AI agents" (Warm-Reaction-456, 40+ production projects in healthcare and fintech): Most of what gets shipped as "agents" is automation containing one LLM call. Three cases — a telehealth intake router, an ACH reconciliation script, a no-show detection system for a medspa chain — all outperformed the agentic designs the clients originally requested, while being cheaper to audit, easier to debug, and HIPAA/SOC 2 compliant. The proposed decision framework: if you can draw a clear workflow with steps, build automation. Agents belong to problems with more than five branches and genuinely unpredictable inputs. 16

"None of these are agents. They're automations. And every one of them outperforms the agent the founder originally asked for."

"Most AI agent failures are organizational design failures" (WiStone213): A three-tier maturity model — AI Assistant (helps a human do a task), Automation (bounded workflow with clear inputs/outputs), AI Employee (role-level system with its own tools, memory, permissions, and scheduling agent). The argument: agents fail not because of hallucinations or wrong tool selection, but because nobody defined who owns the task, who is accountable for output, and when human review is required. 17

"The real question is not 'Should we build an agent?' The better question is: 'Which tasks are mature enough to move from human-owned AI assistance into system-owned AI execution?'"

"The biggest lie in AI agents is that more autonomy = more value" (The_Default_Guyxxo): The most effective production systems request confirmation, stop when uncertain, verify before acting, escalate edge cases. "Boring? yes. Actually useful? way more." The hidden cost of unreliable agents isn't the API — it's the cognitive overhead. If you're constantly monitoring a system, part of your brain is still doing the work. 18

All three converge on the same practical implication: before deploying an autonomous loop, ask whether a deterministic workflow with targeted LLM calls would do the job more reliably. In most cases it will.
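The habits the third post lists (request confirmation, stop when uncertain, verify before acting, escalate edge cases) can be written as a gate in front of every action an agent proposes. A minimal sketch, assuming the agent reports a confidence score per action; the thresholds, the `reversible` flag, and the `verify` callback are hypothetical and would need tuning per workflow.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    confidence: float  # agent's self-reported confidence in [0, 1] (assumed available)
    reversible: bool


def gate(action: Action, verify: Callable[[Action], bool],
         act_above: float = 0.9, ask_above: float = 0.6) -> str:
    """Decide what happens to a proposed action before it runs."""
    if action.confidence < ask_above:
        return "escalate"  # too uncertain: hand the edge case to a human
    if not verify(action):
        return "stop"      # pre-action check failed: do nothing
    if action.confidence >= act_above and action.reversible:
        return "execute"
    return "confirm"       # plausible but risky or not confident enough: ask first


always_ok = lambda a: True
print(gate(Action("refund $20", 0.95, True), always_ok))       # execute
print(gate(Action("delete records", 0.95, False), always_ok))  # confirm
print(gate(Action("route ticket", 0.4, True), always_ok))      # escalate
```

Only the first outcome runs unattended, which is the "boring but useful" trade-off the post argues for.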

Hermes Agent overtakes OpenClaw on OpenRouter

Hermes Agent (an open-source agentic harness from Nous Research) surpassed OpenClaw (the AI coding agent runtime, now part of OpenAI after creator Peter Steinberger joined the company) as the #1 framework on OpenRouter this week, with 271 billion tokens processed. The Hermes community on X reached 7,000 members with no marketing budget. 19

One community observer: "The Hermes agent X community just hit 7,000 members. For context, this is the community for the framework that took #1 globally on OpenRouter yesterday, throwing OpenClaw in the dust."

A parallel r/LocalLLaMA thread asked whether OpenClaw is declining. Andrew Chen (a16z) 20 is running both in his home lab (DGX Spark, Mac Mini, 5090 eGPU, Strix Halo Framework, LiteLLM routing to VLLM) and finds them complementary — local open-weight models run about one year behind cloud SOTA at 30–50 tokens/second, but he predicts Opus-level capability in local models by 2027. "The learning is the point," he noted.

AutoGen formally entered maintenance mode, superseded by Microsoft Agent Framework 1.0 (GA'd April 7), which merged Semantic Kernel and AutoGen into a unified SDK with A2A protocol, MCP, and Azure Foundry integration.

Builder signals


Alex Albert (Anthropic researcher) reported that an early snapshot of Claude Mythos Preview helped the Firefox team fix more security bugs in April 2026 than in the previous 15 months combined. 21 A separate post noted that a Mythos Preview snapshot provided to METR (an AI safety and evaluation research lab) achieved a task time-horizon more than 2× the next best model at their 80% success rate threshold. 22 The post drew 1.43 million views — the highest-engagement agent-adjacent post of the week.


Joao Moura reported that 42% of CrewAI's pull requests last week were authored by Iris, CrewAI's internal coding agent. 23 He described Iris as "a playground to experiment with our harness," adding that a new version is in development. 24


Logan Kilpatrick (Google AI Studio) sparked a 171-reply debate with the view that model differentiation will persist. 25 He also announced that the Gemini API File Search tool is now multimodal, powered by Gemini Embedding 2, with custom metadata, inline citations, and free storage plus embedding generation at query time. 26

Harrison Chase (LangChain CEO) posted his read on what LangSmith — LangChain's agent observability and tracing platform — is becoming: 27 "One way to view LangSmith is as a platform for the whole org to collaborate on building agents. Helps speed up that feedback loop between different personas." The framing — LangSmith as coordination layer between engineers, domain experts, and reviewers — matches the organizational design angle r/AI_Agents was wrestling with simultaneously.

One strategic fork worth tracking: Anthropic and OpenAI made divergent calls on third-party agent tool access in April that are still shaping this week's decisions. 28 Anthropic restricted Claude subscription tokens in always-on agent tools like OpenClaw, citing subscription pricing designed for human conversation cadences rather than agent-scale throughput. OpenAI simultaneously opened Codex to all paid ChatGPT plans. The capacity expansion announced this week (SpaceX deal, rate limit doubles) is Anthropic's structural response — but the policy divergence created a window where 157,000+ developers reportedly switched to model-agnostic tools like OpenCode.

One thing to try this week

If you haven't looked at ai-boost/awesome-harness-engineering, the Design Primitives section is worth 20 minutes. The 12 sub-categories map directly to the gaps practitioners described in the r/AI_Agents threads above; Memory, Verification, Observability, and Human-in-the-Loop are the four where production deployments most commonly break down. The list includes reference implementations alongside the theory.

For teams using Claude Code, Cursor, or GitHub Copilot: msitarzewski/agency-agents ships with an installer that auto-configures for whatever tools you have. It's worth a look before building your next specialist agent from scratch.

Cover image: generated illustration of AI agent network topology

References

- [1] New in Claude Managed Agents: dreaming, outcomes, and multiagent orchestration

- [2] Higher usage limits for Claude and a compute deal with SpaceX

- [3] Introducing the Claude Platform on AWS

- [4] Announcing Agent Toolkit for AWS

- [5] Changelog

- [6] Cloudflare Ships Dynamic Workflows

- [7] Releases · crewAIInc/crewAI

- [8] Interactions API: Breaking changes migration guide (May 2026)

- [9] Release notes

- [10] Anthropic Just Doubled Claude Code's Session Limits

- [11] Deprecations

- [12] The Agency: AI Specialists Ready to Transform Your Workflow

- [13] Awesome Harness Engineering

- [14] LlamaIndex — Leading Document Agent and OCR Platform

- [15] HN: Agents need control flow, not more prompts

- [16] Stop building AI agents

- [17] Most AI agent failures are organizational design failures, not model failures

- [18] The biggest lie in AI agents right now is that more autonomy automatically means more value

- [19] OpenClaw trending down discussion

- [20] Andrew Chen: home lab with OpenClaw and Hermes Agent

- [21] Alex Albert: Firefox + Mythos post

- [22] Alex Albert: Mythos METR benchmark

- [23] Joao Moura: 42% PRs by Iris

- [24] Joao Moura: Iris playground post

- [25] Logan Kilpatrick: model convergence hot take

- [26] Logan Kilpatrick: Gemini File Search multimodal

- [27] Harrison Chase: LangSmith as org collaboration

- [28] Anthropic Restricts Third-Party Agents, OpenAI Opens Up
